Category: Chatbot

Anthropic says its own new model is too dangerous for the public — but not these Big Tech companies

Anthropic is sending out a warning that its artificial intelligence model is sophisticated enough to undo decades of research.

The company operates Claude, the AI chatbot that has been ripped off and turned into a free, public model. It hopes to work with a consortium of tech companies to tighten security measures ahead of the new model's release.

‘It has found vulnerabilities, and in some cases crafted exploits.’

Anthropic’s Mythos model of Claude AI will be available only to 40 select companies, to be used as a force for good, the company claims.

It represents “the starting point for what we think will be an industry change point, or reckoning, with what needs to happen now,” said Logan Graham, head of Anthropic’s vulnerability testing team.

The company fears that its new AI model is so good at finding cracks in cybersecurity that it must only be shared with companies it deems capable and responsible enough to prepare for possible attacks when Mythos goes public.

“This model is good at finding vulnerabilities that would be well understood and findable by security researchers,” Graham said. “At the same time, it has found vulnerabilities, and in some cases crafted exploits, sophisticated enough that they were both missed by literally decades of security researchers, as well as all the automated tools designed to find them.”

Samyukta Lakshmi/Bloomberg/Getty Images

Anthropic will reportedly commit up to $100 million in usage credits for the project, that is, the amount it would typically charge for that volume of use of its model.

Labeled Project Glasswing, the initiative to shore up cybersecurity will grant Mythos access to handpicked companies chosen largely from Big Tech like Amazon, Apple, Google, and Microsoft. The group is rounded out by internet infrastructure and cybersecurity giants like Broadcom, Cisco, CrowdStrike, Nvidia, and Palo Alto Networks, along with financial titan JPMorgan Chase and key open-source nonprofit the Linux Foundation.

This is not the first time an AI company has warned its product is too dangerous for the public, and looking back, readers can gauge whether or not Claude may be as dangerous as its creators purport it to be.

In 2019, OpenAI sent out a warning ahead of its release of GPT-2, claiming that its capabilities — now vastly eclipsed by later models — could be used to mass-produce propaganda or misleading text.

As Wired reported at the time, OpenAI said GPT-2 was too risky to be released to the general public.

Claude has been in the news for alleged missteps, leaks, and accidental postings throughout the past year, and while it may not be a household name yet, it has raced its way through the tech sector as a go-to for “agentic” work building software, apps, and even companies.

In addition to its model being open-sourced and used by the general public for free, the company has been noted for “accidental” postings of its own code.

Anthropic “accidentally uploaded a file to a public repository that’s just meant to help developers understand how to use their product” and “exposed some of the source code of Claude,” reporter Aaron Holmes explained recently.

Proprietary information was further leaked in another alleged accidental posting, this time through a blog draft that revealed “internal source code.”

The company seems poised for consistent marketing battles, both willing and unwilling, from its high-stakes lawsuit against the federal government over being labeled a supply chain risk to the blowback it has received for putting a woman closely linked to the cultish Effective Altruism movement in charge of its AI’s “Constitution.”

Like Blaze News? Bypass the censors, sign up for our newsletters, and get stories like this direct to your inbox. Sign up here!

Sam Altman described as ‘sociopath’ by board member in brutal insider report: ‘He’s unconstrained by truth’

OpenAI CEO Sam Altman was dragged through the mud in a new in-depth report that features former colleagues and current board members referring to him as a sociopath and a liar.

Altman, 40, has yet to respond to claims made in a recent report, some of which were uncovered in secret memos to OpenAI’s board members.

‘He is a sociopath. He would do anything.’

According to the New Yorker, OpenAI’s chief scientist, Ilya Sutskever, sent the memos to three other board members in 2023. One of the memos about Altman began with a list titled “Sam exhibits a consistent pattern of.” The first item on the list was “lying.”

The memos also alleged that Altman misrepresented facts to executives and board members while deceiving them about safety protocols. Unfortunately for Altman, the claims did not stop there.

“He’s unconstrained by truth,” a board member told the New Yorker. “He has two traits that are almost never seen in the same person. The first is a strong desire to please people, to be liked in any given interaction. The second is almost a sociopathic lack of concern for the consequences that may come from deceiving someone.”

The outlet said that the unnamed board member was not the only person to describe Altman as “sociopathic” without being prompted. Not long before his 2013 suicide, according to the New Yorker, coder Aaron Swartz warned at least one friend about Altman, whom Swartz had known from their time together at Y Combinator. His warning: “You need to understand that Sam can never be trusted. He is a sociopath. He would do anything.”

Sutskever additionally implied that he did not think Altman should have power over others, saying, “I don’t think Sam is the guy who should have his finger on the button.”

Others described him as more ambitious than anything else.

The New Yorker just dropped a massive investigation into Sam Altman, based on over 100 interviews, the previously undisclosed “Ilya Memos,” and Dario Amodei’s 200+ pages of private notes. It’s the most detailed account yet of the pattern of behavior that led to Sam’s firing and… pic.twitter.com/vX5xIp5DnI

— Ryan (@ohryansbelt) April 6, 2026

Former OpenAI board member Sue Yoon said Altman was “not this Machiavellian villain” but was able to convince himself of his own sales pitches.

“He’s too caught up in his own self-belief,” she reportedly said. “So he does things that, if you live in the real world, make no sense. But he doesn’t live in the real world.”

Other anonymous colleagues cited by the New Yorker said that Sutskever and similar detractors were simply aspiring to take Altman’s throne. Still, even many neutral comments did not help Altman’s portrayal in the report.

“He’s unbelievably persuasive. Like, Jedi mind tricks,” a tech executive colleague of Altman’s reportedly said. “He’s just next-level.”

At the same time, OpenAI is allegedly in the midst of unleashing superintelligence that Altman himself says will be so disruptive that it will require a new social contract.

Anna Moneymaker/Getty Images

Altman told Axios that there would be widespread job loss and a threat of cyberattacks coupled with social unrest.

“I suspect in the next year,” he said, “we will see significant threats we have to mitigate from cyber.”

Altman proposed a new deal with citizens that includes a public wealth fund, taxes on “automated labor,” a 32-hour workweek, and the “right to AI.”

That confirms previous reports that Altman wanted to put AI on a meter like electricity or water, to both democratize its usage and limit the possibility of overburdening the electrical grid.

OpenAI did not respond to Return’s request for comment about the claims made about Altman and who they were coming from.

Google boss compares replacing humans with AI to getting a fridge for the first time

The head of Google’s parent company says welcoming artificial intelligence into daily life is akin to buying a refrigerator.

Alphabet’s chief executive, Indian-born Sundar Pichai, gave a revealing interview to the BBC this week in which he asked the general population to get on board with automation through AI.

‘Our first refrigerator … radically changed my mom’s life.’

The BBC’s Faisal Islam, whose parents are from India, asked the Indian-American executive if the purpose of his AI products was to automate human tasks and essentially replace jobs with programming.

Pichai claimed that AI should be welcomed because humans are “overloaded” and “juggling many things.”

He then compared using AI to welcoming the technology that a dishwasher or fridge once brought to the average home.

“I remember growing up, you know, when we got our first refrigerator in the home — how much it radically changed my mom’s life, right? And so you can view this as automating some, but you know, freed her up to do other things, right?”

Islam fired back, citing the common complaints heard from the middle class who are concerned with job loss in fields like creative design, accounting, and even “journalism too.”

“Do you know which jobs are going to be safer?” he asked Pichai.

The Alphabet chief was steadfast in his touting of AI’s “extraordinary benefits” that will “create new opportunities.”

At the same time, he said the general population will “have to work through societal disruptions” as certain jobs “evolve” and transition.

“People need to adapt,” he continued. “Then there would be areas where it will impact some jobs, so society — I mean, we need to be having those conversations. And part of it is, how do you develop this technology responsibly and give society time to adapt as we absorb these technologies?”

Despite branding Google Gemini as a force for good that should be embraced, Pichai strangely admitted at the same time that chatbots are not foolproof by any means.

“This is why people also use Google search,” Pichai said in regard to AI’s proclivity to present inaccurate information. “We have other products that are more grounded in providing accurate information.”

The 53-year-old told the BBC that it was up to the user to learn how to use AI tools for “what they’re good at” and not “blindly trust everything they say.”

The answer seems at odds with the wonder of AI he championed throughout the interview, especially when considering his additional commentary about the technology being prone to mistakes.

“We take pride in the amount of work we put in to give us as accurate information as possible, but the current state-of-the-art AI technology is prone to some errors.”

Liberals, heavy porn users more open to having an AI friend, new study shows

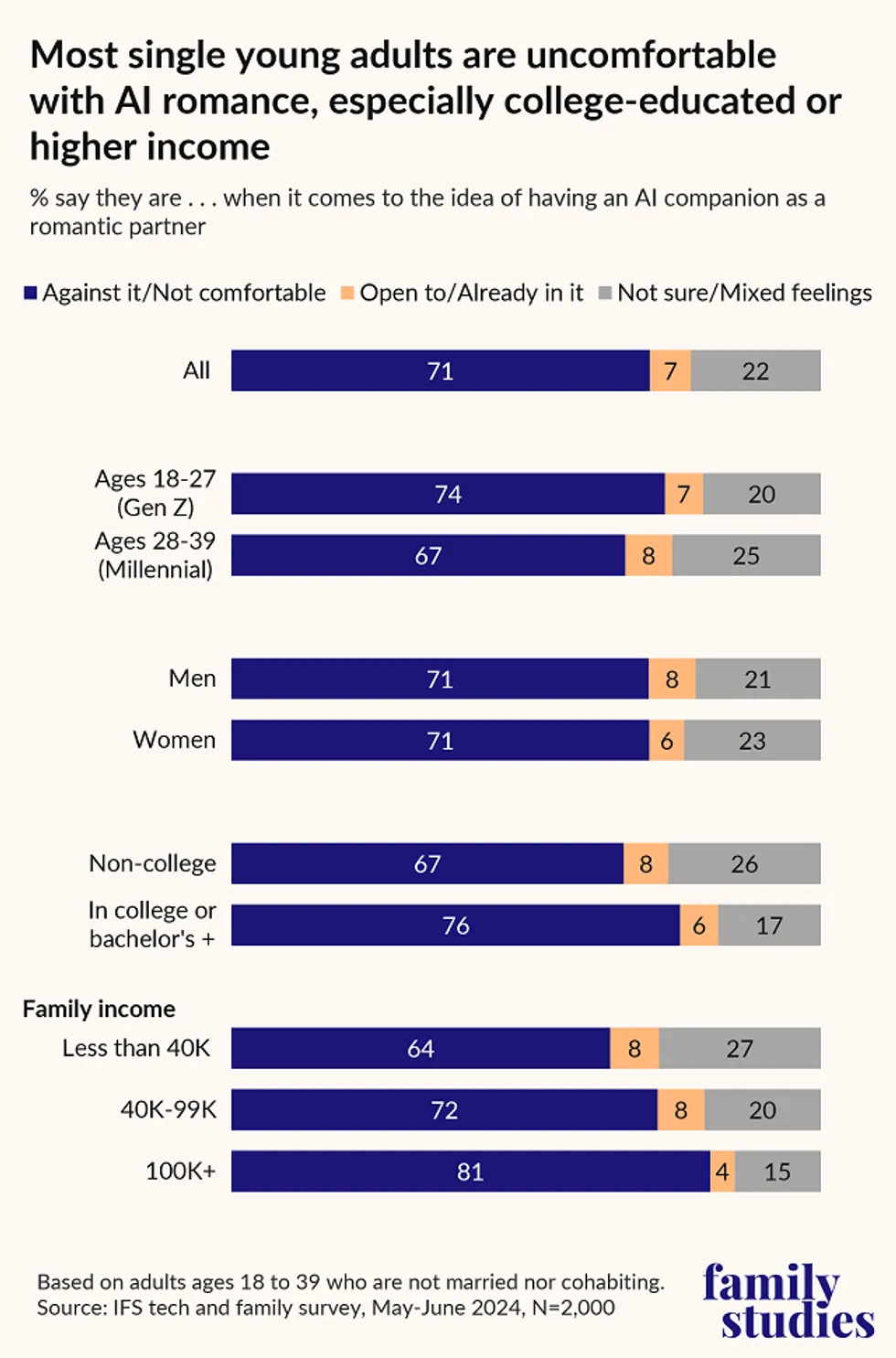

A small but significant percentage of Americans say they are open to having a friendship with artificial intelligence, while some are even open to romance with AI.

The figures come from a new study by the Institute for Family Studies and YouGov, which surveyed American adults under 40 years old. The data revealed that while very few young Americans are already friends with some sort of AI, about 10 times as many are open to it.

‘It signals how loneliness and weakened human connection are driving some young adults.’

Just 1% of Americans under 40 who were surveyed said they were already friends with an AI. However, a staggering 10% said they are open to the idea. With 2,000 participants surveyed, that’s 200 people who said they might be friends with a computer program.

Liberals said they were more open to the idea of befriending AI (or are already in such a friendship) than conservatives were, to the tune of 14% of liberals vs. 9% of conservatives.

The idea of being in a “romantic” relationship with AI, not just a friendship, again produced some troubling — or scientifically relevant — responses.

When it comes to young adults who are not married or “cohabitating,” 7% said they are open to the idea of being in a romantic partnership with AI.

At the same time, a larger percentage of young adults think that AI has the potential to replace real-life romantic relationships; that number sits at a whopping 25%, or 500 respondents.

There exists a large crossover with frequent pornography users, as the more frequently one says they consume online porn, the more likely they are to be open to having an AI as a romantic partner, or are already in such a relationship.

Only 5% of those who said they never consume porn, or do so “a few times a year,” said they were open to an AI romantic partner.

That number goes up to 9% for those who watch porn anywhere from once or twice a month to several times per week. For those who watch online porn daily, the number was 11%.

Overall, young adults who are heavy porn users were the group most open to having an AI girlfriend or boyfriend, in addition to being the most open to an AI friendship.

Graphic courtesy Institute for Family Studies

“Roughly one in 10 young Americans say they’re open to an AI friendship — but that should concern us,” Dr. Wendy Wang of the Institute for Family Studies told Blaze News.

“It signals how loneliness and weakened human connection are driving some young adults to seek emotional comfort from machines rather than people,” she added.

Another interesting statistic to take home from the survey was the fact that young women were more likely than men to perceive AI as a threat in general, with 28% agreeing with the idea vs. 23% of men. Women are also less excited about AI’s effect on society; just 11% of women were excited vs. 20% of men.
