Category: Return

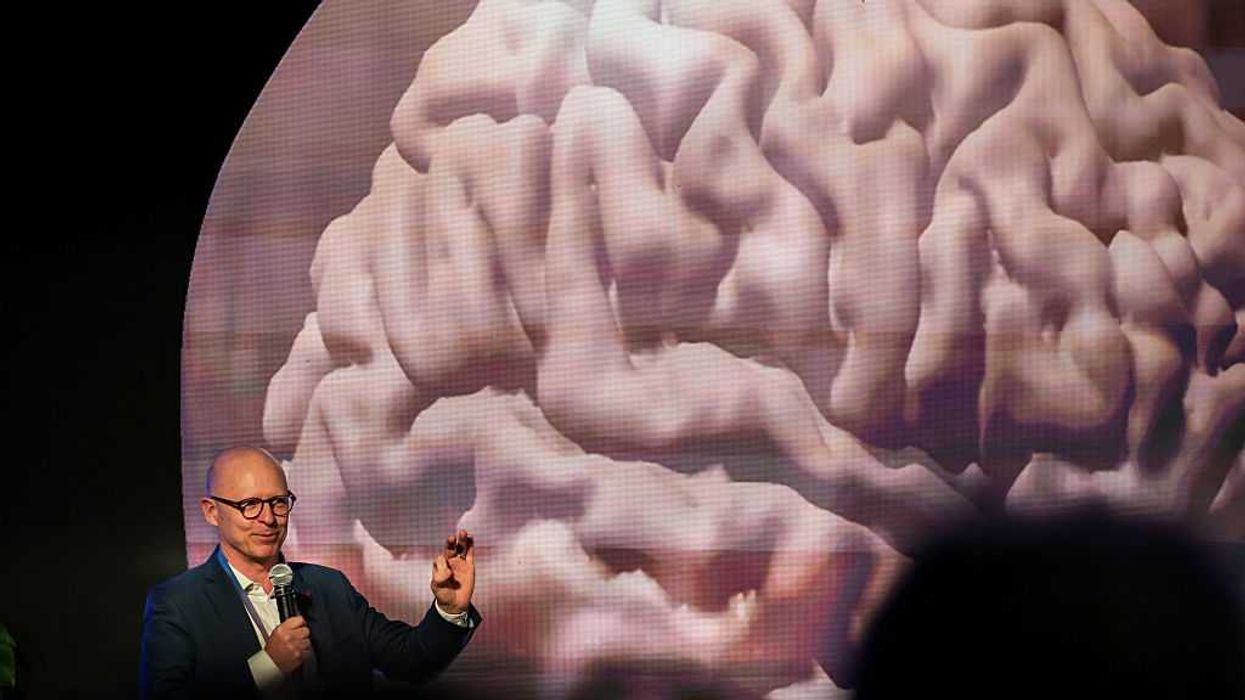

GOD-TIER AI? Why there’s no easy exit from the human condition

Many working in technology are entranced by a story of a god-tier shift that is soon to come. The story is the “fast takeoff” for AI, often involving an “intelligence explosion.” There will be a singular moment, a cliff-edge, when a machine mind, having achieved critical capacities for technical design, begins to implement an improved version of itself. In a short time, perhaps mere hours, it will soar past human control, becoming a nearly omnipotent force, a deus ex machina for which we are, at best, irrelevant scenery.

This is a clean narrative. It is dramatic. It has the terrifying, satisfying shape of an apocalypse.

It is also a pseudo-messianic myth resting on a mistaken understanding of what intelligence is, what technology is, and what the world is.

The fantasy of a runaway supermind achieving escape velocity collides with the stubborn, physical, and institutional realities of our lives. This narrative mistakes a scalar for a capacity, ignoring the fact that intelligence is not a context-free number but a situated process, deeply entangled with physical constraints.

The fixation on an instantaneous leap reveals a particular historical amnesia. We are told this new tool will be a singular event. The historical record suggests otherwise.

Major innovations, the ones that truly resculpted civilization, were never events. They were slow, messy, multi-decade diffusions. The printing press did not achieve the propagation of knowledge overnight; its revolutionary power was in the gradual enabling of the secure communication of information, which in turn allowed knowledge to compound. The steam engine unfolded over generations, its deepest impact trailing its invention by decades.

With each novel technology, we have seen a similar cycle of panic: a flare of moral alarm, a set of dire predictions, and then, inevitably, the slow, grinding work of normalization. The world adapts. The apocalypse is deferred. The technology is integrated. There is little reason to believe this time is different, however much the myth insists upon it.

The fantasy of a fast takeoff is conspicuously neat. It is a narrative free of friction, of thermodynamics, of the intractable mess of material existence. Reality, in contrast, has all of these things. A disembodied mind cannot simply will its own improved implementation into being.

Photo by Arda Kucukkaya/Anadolu via Getty Images

Any improvement, recursive or otherwise, encounters physical limits. Computation is bounded by the speed of light. The required energy is already staggering. Improvements will require hardware that depends on factories, rare minerals, and global supply chains. These things cannot be summoned by code alone. Even when an AI can design a better chip, that design will need to be fabricated. The feedback loop between software insight and physical hardware is constrained by the banal, time-consuming realities of engineering, manufacturing, and logistics.

The intellectual constraints are just as rigid. The notion of an “intelligence explosion” assumes that all problems yield to better reasoning. This is an error. Many hard problems are computationally intractable and provably so. They cannot be solved by superior reasoning; they can only be approximated in ways subject to the limits of energy and time.

Ironically, we already have a system of recursive self-improvement. It is called civilization, employing the cooperative intelligence of humans. Its gains over the centuries have been steady and strikingly gradual, not explosive. Each new advance requires more, not less, effort. When the “low-hanging fruit” is harvested, diminishing returns set in. There is no evidence that AI, however capable, is exempt from this constraint.

Central to the concept of fast takeoff is the erroneous belief that intelligence is a singular, unified thing. Recent AI progress provides contrary evidence. We have not built a singular intelligence; we have built specific, potent tools. AlphaGo achieved superhuman performance in Go, a spectacular leap within its domain, yet its facility did not generalize to medical research. Large language models display great linguistic ability, but they also “hallucinate,” and pushing from one generation to the next requires not a sudden spark of insight, but an enormous effort of data and training.

The likely future is not a monolithic supermind but an AI service providing a network of specialized systems for language, vision, physics, and design. AI will remain a set of tools, managed and combined by human operators.

To frame AI development as a potential catastrophe that suddenly arrives swaps a complex, multi-decade social challenge for a simple, cinematic horror story. It allows us to indulge in the fantasy of an impending technological judgment, rather than engage with the difficult path of development. The real work will be gradual, involving the adaptation of institutions, the shifting of economies, and the management of tools. The god-machine is not coming. The world will remain, as ever, a complex, physical, and stubbornly human affair.

Google boss compares replacing humans with AI to getting a fridge for the first time

The head of Google’s parent company says welcoming artificial intelligence into daily life is akin to buying a refrigerator.

Alphabet’s chief executive, Indian-born Sundar Pichai, gave a revealing interview to the BBC this week in which he asked the general population to get on board with automation through AI.

‘Our first refrigerator … radically changed my mom’s life.’

The BBC’s Faisal Islam, whose parents are from India, asked the Indian-American executive if the purpose of his AI products was to automate human tasks and essentially replace jobs with programming.

Pichai claimed that AI should be welcomed because humans are “overloaded” and “juggling many things.”

He then compared using AI to welcoming the technology that a dishwasher or fridge once brought to the average home.

“I remember growing up, you know, when we got our first refrigerator in the home — how much it radically changed my mom’s life, right? And so you can view this as automating some, but you know, freed her up to do other things, right?”

Islam fired back, citing the common complaints heard from the middle class who are concerned with job loss in fields like creative design, accounting, and even “journalism too.”

“Do you know which jobs are going to be safer?” he posited to Pichai.

The Alphabet chief was steadfast in his touting of AI’s “extraordinary benefits” that will “create new opportunities.”

At the same time, he said the general population will “have to work through societal disruptions” as certain jobs “evolve” and transition.

“People need to adapt,” he continued. “Then there would be areas where it will impact some jobs, so society — I mean, we need to be having those conversations. And part of it is, how do you develop this technology responsibly and give society time to adapt as we absorb these technologies?”

Despite branding Google Gemini as a force for good that should be embraced, Pichai strangely admitted at the same time that chatbots are not foolproof by any means.

“This is why people also use Google search,” Pichai said in regard to AI’s proclivity to present inaccurate information. “We have other products that are more grounded in providing accurate information.”

The 53-year-old told the BBC that it was up to the user to learn how to use AI tools for “what they’re good at” and not “blindly trust everything they say.”

The answer seems at odds with the wonder of AI he championed throughout the interview, especially when considering his additional commentary about the technology being prone to mistakes.

“We take pride in the amount of work we put in to give us as accurate information as possible, but the current state-of-the-art AI technology is prone to some errors.”

Like Blaze News? Bypass the censors, sign up for our newsletters, and get stories like this direct to your inbox. Sign up here!

US NEXT? Sightings of humanoid robots spike on the streets of Moscow

Delivery robots have been promoted in Moscow since around 2019, through Russia’s version of Uber Eats.

The Yandex.Eats app from tech giant and search engine company Yandex released a fleet of 20 robots across the city that year.

‘Yandex plans to release around 1,300 robots per month by the end of 2027.’

By 2023, Yandex added another 50 robots from its third-generation production line, touting that 87% of orders were delivered in eight to 12 minutes.

“About 15 delivery robots are enough to deliver food and groceries in a residential area with a population of 5,000 people,” Yandex said at the time, per RT.

However, what started as a few rectangular robots wheeling through the streets has seemingly spiraled into what will become thousands of bots, including both harmless-looking buggies and, perhaps more frightening, bipedal bots.

The news comes as sightings of humanoid robots in Russia are increasing.

According to TAdvisor, Yandex plans to release around 1,300 robots per month by the end of 2027, for a whopping total of approximately 20,000 machines. The goal is to have a massive fleet of bots for deliveries, and to supply couriers to other companies, while reducing the cost of shipping.

At the same time, Yandex also announced development of humanoid robots. Videos have recently popped up of a smaller bot walking alongside a delivery bot in 2024, but it is hard to tell if it was real or a human in costume.

RT recently shared a video of a seemingly real bipedal bot running through the streets of Moscow with a delivery on its back. The bot also took time to dance with an old man, for some reason.

However, it is hard to believe that any Russian autonomous bots are ready for mass production given the recent demo showcased at a technology event in Moscow.

Aldol, a robot developed by a company of the same name, was described as Russia’s first anthropomorphic bot powered by AI.

Last week, the robot was brought on stage and took a few shaky steps while waving to the audience before tumbling robo-face-first onto the floor. Two presenters dragged the robot off stage as if they were rescuing a wounded comrade, while at the same time a third member of the team struggled to put a curtain back into place to hide the debacle.

Still, Yandex is hoping it can expand its robots into fields like medicine, while simultaneously perfecting the use of its delivery bots. The company plans to have a robot at each point of contact before a delivery gets to the human recipient.

The plan, to be showcased at the company’s own offices, is to have an automated process in which a humanoid robot picks up an order and packs it onto a wheeled delivery bot. Then, the wheeled bot takes the order to another humanoid bot on the receiving end, which then delivers it to the customer.

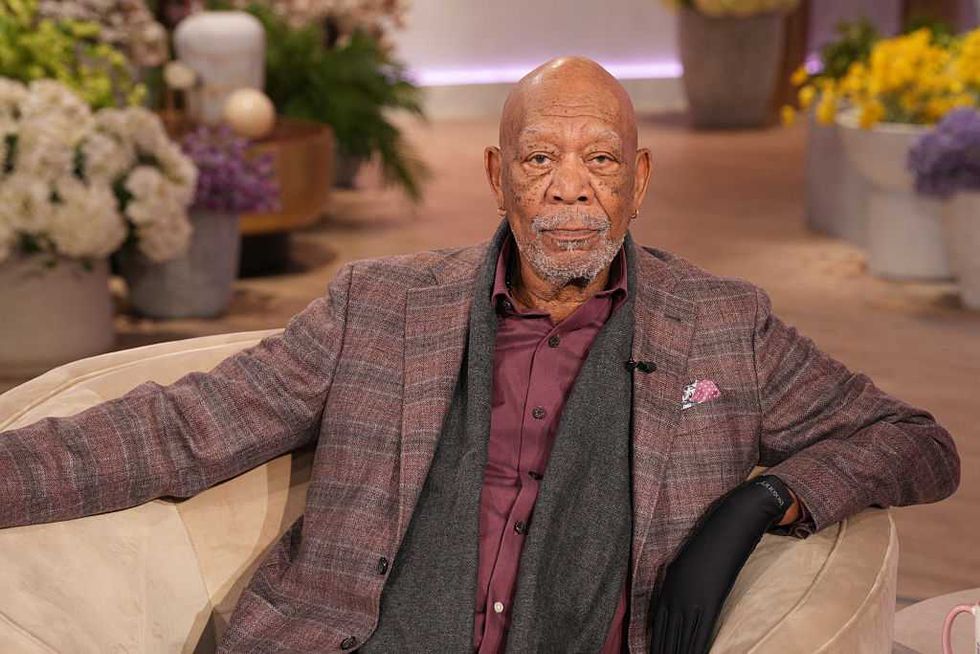

‘You’re robbing me’: Morgan Freeman slams Tilly Norwood, AI voice clones

The use of celebrity likeness for AI videos is spiraling out of control, and one of Hollywood’s biggest stars is not having it.

Despite the use of AI in online videos being fairly new, it has already become a trope to use an artificial version of a celebrity’s voice for content relating to news, violence, or history.

‘I don’t appreciate it, and I get paid for doing stuff like that.’

This is particularly true when it comes to satirical videos that are meant to sound like documentaries. Creators love to use recognizable voices, like David Attenborough’s and, of course, Morgan Freeman’s, whose voice has become so recognizable that others have labeled him as “the voice of God.”

However, the 88-year-old Freeman is not pleased about his voice being replicated. In an interview with the Guardian, he said that while some actors, like James Earl Jones (who voiced Darth Vader), have consented to having their voices imitated with computers, he has not.

“I’m a little PO’d, you know,” Freeman told the outlet. “I’m like any other actor: Don’t mimic me with falseness. I don’t appreciate it, and I get paid for doing stuff like that, so if you’re gonna do it without me, you’re robbing me.”

Freeman explained that his lawyers have been “very, very busy” in pursuing “many … quite a few” cases in which his voice was replicated without his consent.

In the same interview, the Memphis native was also not shy about criticizing the concept of AI actors.

Photo by Chris Haston/WBTV via Getty Images

Freeman was asked about Tilly Norwood, the AI character introduced by Dutch actress Eline Van der Velden in 2025. The pretend-world character is meant to be an avatar mimicking celebrity status, while also cutting costs in the casting room.

“Nobody likes her because she’s not real and that takes the part of a real person,” Freeman jabbed. “So it’s not going to work out very well in the movies or in television. … The union’s job is to keep actors acting, so there’s going to be that conflict.”

Freeman spoke out about the use of his voice in 2024, as well. According to a report by 4 News Now, a TikTok creator posted a video claiming to be Freeman’s niece and used an artificial version of his voice to narrate the video.

In response, Freeman wrote on X, “Thank you to my incredible fans for your vigilance and support in calling out the unauthorized use of an A.I. voice imitating me.”

He added, “Your dedication helps authenticity and integrity remain paramount. Grateful.”

Norwood is not the first attempt at taking an avatar mainstream. In 2022, Capitol Records flirted with an AI rapper named FN Meka; the very idea that the rapper was even signed to a label was historic in the first place.

The rapper, or more likely its representatives, was later dropped from the label after activists claimed the character reinforced racial stereotypes.

1980s-inspired AI companion promises to watch and interrupt you: ‘You can see me? That’s so cool’

A tech entrepreneur is hoping casual AI users and businesses alike are looking for a new pal.

In this case, “PAL” is a floating term that can mean either a complimentary video companion or a replacement for a human customer service worker.

‘I love the print on your shirt; you’re looking sharp today.’

Tech company Tavus calls PALs “the first AI built to feel like real humans.”

Overall, Tavus’ messaging is seemingly directed toward both those seeking an artificial friend and those looking to streamline their workforce.

As a friend, the avatar will allegedly “reach out first” and contact the user by text or video call. It can allegedly anticipate “what matters” and step in “when you need them the most.”

In an X post, founder Hassaan Raza spoke about PALs being emotionally intelligent and capable of “understanding and perceiving.”

The AI bots are meant to “see, hear, reason,” and “look like us,” he wrote, further cementing the use of the technology as companion-worthy.

“PALs can see us, understand our tone, emotion, and intent, and communicate in ways that feel more human,” Raza added.

In a promotional video for the product, the company showcased basic interactions between a user and the AI buddy.

A woman is shown greeting the “digital twin” of Raza, as he appears as a lifelike AI PAL on her laptop.

Raza’s AI responds, “Hey, Jessica. … I’m powered by the world’s fastest conversational AI. I can speak to you and see and hear you.”

Excited by the notion, Jessica responds, “Wait, you can see me? That’s so cool.”

The woman then immediately seeks superficial validation from the artificial person.

“What do you think of my new shirt?” she asks.

The AI lives up to the trope that chatbots are largely agreeable no matter the subject matter and says, “I love the print on your shirt; you’re looking sharp today.”

After the pleasantries are over, Raza’s AI goes into promo mode and boasts about its ability to use “rolling vision, voice detection, and interruptibility” to seem more lifelike for the user.

The video soon shifts to messaging about corporate integration meant to replace low-wage employees.

Describing the “digital twins” or AI agents, Raza explains that the AI program is an opportunity to monetize celebrity likeness or replace sales agents or customer support personnel. He claims the avatars could also be used in corporate training modules.

The interface of the future is human.

We’ve raised a $40M Series B from CRV, Scale, Sequoia, and YC to teach machines the art of being human, so that using a computer feels like talking to a friend or a coworker.

And today, I’m excited for y’all to meet the PALs: a new… pic.twitter.com/DUJkEu5X48

— Hassaan Raza (@hassaanrza) November 12, 2025

In his X post, Raza also attempted to flex his acting chops by creating a 200-second film about a man/PAL named Charlie who is trapped in a computer in the 1980s.

Raza revives the computer after it spent 40 years on the shelf, finding Charlie still trapped inside. In an attempt at comedy, Charlie asks Raza if flying cars or jetpacks exist yet. Raza responds, “We have Salesforce.”

The founder goes on to explain that PALs will “evolve” with the user, remembering preferences and needs. While these features are presented as groundbreaking, the PAL essentially amounts to being an AI face attached to an ongoing chatbot conversation.

AI users know that modern chatbots like Grok or ChatGPT are fully capable of remembering previous discussions and building upon what they have already learned. What’s seemingly new here is the AI being granted app permissions to contact the user and further infiltrate personal space.

Whether that annoys the user or is exactly what the person needs or wants is up for interpretation.

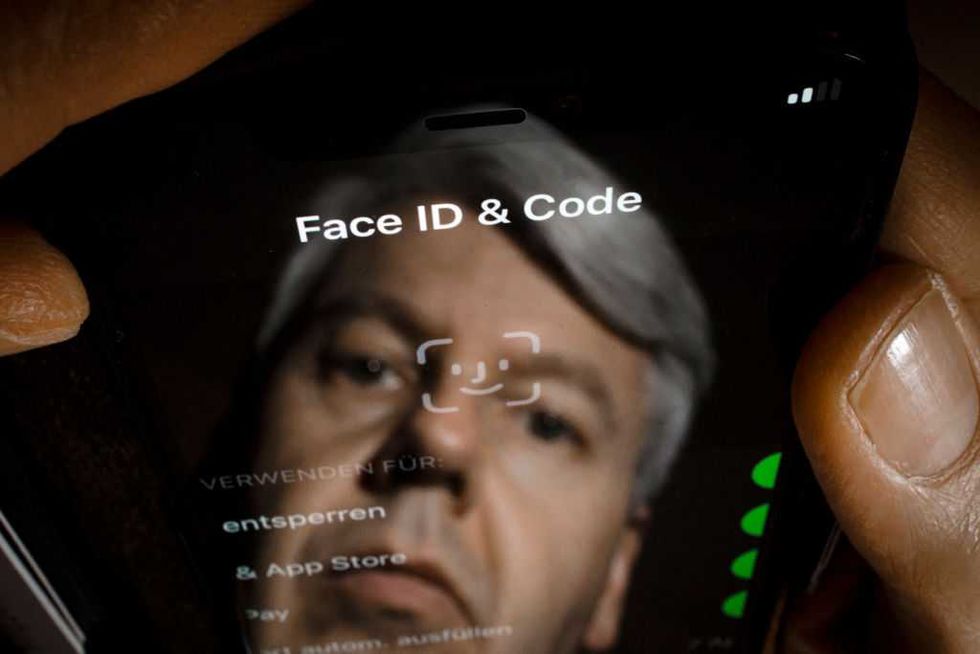

Apple rolls out digital ID, says users get ‘privacy and security’

Digital identification is the latest frontier in privacy and data protection, according to its newest purveyor.

Apple rolled out support for digital ID in its Apple Wallet this week, boasting that users can provide a plethora of personal data in order to add their digital identifiers to their phones.

‘Biometric authentication using Face ID and Touch ID helps make sure that only you can view and use your Digital ID.’

In order to be eligible for the privilege of digital ID, Apple requires users to have the following:

- an iPhone 11 or newer or an Apple Watch Series 6 or newer.

- the latest software version.

- an Apple account with two-factor authentication turned on.

- a valid U.S. passport.

- a device with the region set to the United States.

Users who meet the prerequisites must scan their passports into their phones and provide an additional live photo.

The photo and information must then be authenticated with Face ID or Touch ID.

Digital ID users can present their e-documents at TSA checkpoints for boarding domestic flights and at select businesses, Apple wrote in a blog post.

TSA lists digital ID as being supported in at least 16 different states for domestic air travel, as well as Puerto Rico. Apple’s digital ID in particular is eligible in most participating states, including Arizona, California, Colorado, Georgia, Hawaii, Iowa, Maryland, Montana, New Mexico, and Ohio.

States like Arkansas, Louisiana, New York, and Virginia only support a state-sponsored digital ID.

“Digital ID in Apple Wallet takes advantage of the privacy and security features already built into iPhone and Apple Watch to help protect against tampering and theft,” Apple claimed.

“Your Digital ID data is encrypted. Apple can’t see when and where you use your Digital ID, and biometric authentication using Face ID and Touch ID helps make sure that only you can view and use your Digital ID,” the company added.

The justification for digital ID on the grounds of increased privacy and security mirrors reasoning used by the U.K. government during its recent introduction of mandatory digital ID for its citizens.

Photo Illustration by Thomas Trutschel/Photothek via Getty Images

“This government will make a new, free-of-charge digital ID mandatory for the right to work by the end of this parliament. Let me spell that out: You will not be able to work in the United Kingdom if you do not have digital ID,” U.K. Prime Minister Keir Starmer announced in September.

The leader stated that the digital ID would help crack down on illegal employment and immigration, before adding a moral justification to his argument.

“Because decent, pragmatic, fair-minded people, they want us to tackle the issues that they see around them. And, of course, the truth is we won’t solve our problems if we don’t also take on the root causes.”

As Blaze News previously reported, the digital ID movement seemingly started in the U.K. around 2004. At that time, the BBC published a report criticizing the government and the IDs as a “badly thought out” means of fighting organized crime and terrorism.

Since then, the idea has long been perpetuated by the World Economic Forum, the yearly gathering of government officials and international businessmen who discuss global policy and reform.

The WEF published “A Blueprint for Digital Identity” in 2016, citing the Aadhaar program, a government ID from India. The initiative was meant to “increase social and financial inclusion” for Indians. The Unique Identification Authority of India holds a database of user information “such as name, date of birth, and biometrics data that may include a photograph, fingerprint, iris scan, or other information.”

Over 1 billion Indians have enrolled in the program for the 12-digit identity number.

In 2023, the WEF promoted a report on reimagining digital ID.

Troops in orbit? US dominance demands Space Force ‘guardians,’ ex-military brass claim

A group of former military officers says human Space Force missions could tilt the scales against America’s enemies.

In a new report, the Mitchell Institute for Aerospace Studies advocated the integration of man in space as the next step required to gain a tactical edge.

‘Astronaut guardians may be necessary to execute and secure missions that cannot be accomplished through remote operations.’

The Mitchell Institute calls itself an “independent, nonpartisan research organization” and is staffed by a plethora of retired military personnel, including a former Air Force brigadier general, general, and lieutenant general. Notably, the staff boasts retired Space Force Colonel Charles Galbreath, who serves as a director and senior resident fellow for space studies.

It was Galbreath who wrote the recent study, which determined that the Space Force’s dynamic space operations will need to encompass orbital and terrestrial links and to establish space infrastructure in the future.

One of the most important areas of focus, Galbreath wrote, should be the need for crewed missions.

Labeling humans as the “most flexible system ever launched into space,” the former Space Force colonel said that “guardians in space” may be essential for future operations.

“Today, the Space Force does not have guardians operating in the space domain for military missions. However, as humanity’s interests in space go further from the Earth, astronaut guardians may be necessary to execute and secure missions that cannot be accomplished through remote operations,” Galbreath wrote.

The adaptability of human decision-making could present “fundamental challenges” to enemy decision-making procedures, he argued. For example, adding humans into a spacecraft would “raise the threshold” of acceptable hostile actions from foreign governments.

“Harming an uncrewed satellite is one thing; harming a space station with military crew on it is a completely different risk calculus for an adversary to consider,” Galbreath hypothesized.

As reported by Defense One, John Shaw, the former deputy leader of U.S. Space Command, recently appeared at a virtual event for the Mitchell Institute, where he expressed skepticism about putting troops in space in the immediate future.

“It’s probably when we’re projecting power across great distances, and it’s probably so they can be closer to an intense command and control capability where you need humans in the decision-making,” Shaw said.

Describing the placement of guardians in space as “inevitable,” Galbreath said during the same event that it’s going to take about 10 years to get the idea into practice due to the time it takes to develop the pipeline and training that would enable such a program.

“We can’t wake up one day and say, ‘My gosh, we need guardians in space.’ … We needed to make that decision 10 years ago,” Galbreath claimed.

According to the Mitchell report, there also exists a need for the Space Force to use alternate forms of propulsion, conduct in-space assembly, and create a supply chain for parts and infrastructure in order to fix satellites, for example.

GAMBLE: In huge new deals, ESPN and Google cave to the online betting economy

A simple Google search stopped being simple a long time ago. With sports scores, flight costs, and news articles being integrated into the engine over the years, it seemed the search giant could not pack any more ways to push its verticals into the engine.

But it’s still trying.

If 2024 was the year of the small modular nuclear reactor, with reactors approved en masse to power AI, 2025 may be the year of the gambling partnership.

‘Just ask something like “What will GDP growth be for 2025?”’

Google and Disney’s ESPN have both inked new deals with gambling websites that will further increase the visibility of betting in everyday life.

Why not gamble?

Google announced in a blog post on Thursday it will integrate both Kalshi and Polymarket into its engine “so you can ask questions about future market events and harness the wisdom of the crowds.”

The pleasant descriptors for the American trading websites can be further summarized by noting they are simply platforms for gambling on nearly anything.

At the time of this writing, Kalshi’s feature bet is who will be nominated for Best New Artist at the 2026 Grammys. On Polymarket, users can bet on when the government shutdown will end, who will win the Super Bowl, or on the price of Bitcoin.

Google says, “Just ask something like ‘What will GDP growth be for 2025?’ directly from the search box to see current probabilities in the market and how they’ve changed over time.”

Photo by Adam Gray/Bloomberg via Getty Images

Popular gaming (not for kids)

ESPN decided to end its partnership with Penn Entertainment early, just two years into a supposed 10-year deal. ESPN provided a $38.1 million buyout, according to Sportico, and then turned around and linked up with DraftKings immediately.

Where Penn operates casinos and slots in addition to its online sportsbook, DraftKings is not your father’s gambling dynasty. Instead, the brand is fully immersed in the culture, consistently appearing as a sponsor on popular YouTube channels that target a younger demographic.

What started as a company meant for fantasy drafts has evolved into a gambling empire that tends to skew younger and has a more lenient platform in terms of what types of sports bets are allowed.

Interestingly, Penn Entertainment previously owned Barstool Sports before selling it back to founder Dave Portnoy, who would also later partner with DraftKings.

Photo by Erica Denhoff/Icon Sportswire via Getty Images

No Escape

DraftKings has previously partnered with professional sports teams and leagues, including those in the NFL, MLB, and NBA. Now, after also announcing a deal with NBCUniversal in September, the company’s ads will appear across every major sports league’s broadcasts.

These include the NFL, PGA Tour, Ryder Cup, Premier League soccer, NCAA football, the NBA, and the WNBA, as well as Super Bowl LX, NBA All-Star Weekend, and the 2026 FIFA Men’s World Cup.

On ESPN, the integration will be more betting-based, with the network saying it will roll out DraftKings in ESPN’s full “ecosystem” to offer at least three DraftKings products starting in December.

With search engines, networks, sports leagues, and YouTubers all jumping on board with the gambling revolution, it seems a betting culture is being woven into all facets of the economy … and life itself.

Trump tech czar slams OpenAI scheme for federal ‘backstop’ on spending — forcing Sam Altman to backtrack

OpenAI is under the spotlight after seemingly asking for the federal government to provide guarantees and loans for its investments.

Now, as the company is walking back its statements, a recent OpenAI letter has resurfaced that may prove it is talking in circles.

‘We’re always being brought in by the White House …’

The artificial intelligence company is predominantly known for its free and paid versions of ChatGPT. Microsoft, its key investor, has sunk over $13 billion into the company and holds a 27% stake.

The recent controversy stems from an interview OpenAI chief financial officer Sarah Friar gave to the Wall Street Journal. Friar said in the interview, published Wednesday, that OpenAI had goals of buying up the latest computer chips before its competition could, which would require sizeable investment.

“This is where we’re looking for an ecosystem of banks, private equity, maybe even governmental … the way governments can come to bear,” Friar said, per Tom’s Hardware.

Reporter Sarah Krouse asked for clarification on the topic, which is when Friar expressed interest in federal guarantees.

“First of all, the backstop, the guarantee that allows the financing to happen, that can really drop the cost of the financing but also increase the loan to value, so the amount of debt you can take on top of an equity portion for —” Friar continued, before Krouse interrupted, seeking clarification.

“[A] federal backstop for chip investment?”

“Exactly,” Friar said.

Krouse bored in further on the point, asking whether Friar had been speaking to the White House about how to “formalize” the “backstop.”

“We’re always being brought in by the White House, to give our point of view as an expert on what’s happening in the sector,” Friar replied.

After these remarks were publicized, OpenAI immediately backtracked.

RELATED: Stop feeding Big Tech and start feeding Americans again

On Wednesday night, Friar posted on LinkedIn that “OpenAI is not seeking a government backstop” for its investments.

“I used the word ‘backstop’ and it muddied the point,” she continued. She went on to claim that the full clip showcased her point that “American strength in technology will come from building real industrial capacity which requires the private sector and government playing their part.”

On Thursday morning, David Sacks, President Trump’s special adviser on crypto and AI, stepped in to crush any of OpenAI’s hopes of government guarantees, even if they were only alleged.

“There will be no federal bailout for AI,” Sacks wrote on X. “The U.S. has at least 5 major frontier model companies. If one fails, others will take its place.”

Sacks added that the White House does want to make power generation easier for AI companies, but without increasing residential electricity rates.

“Finally, to give benefit of the doubt, I don’t think anyone was actually asking for a bailout. (That would be ridiculous.) But company executives can clarify their own comments,” he concluded.

The saga was far from over, though, as OpenAI CEO Sam Altman seemingly dug the hole even deeper.

RELATED: Artificial intelligence is not your friend

By Thursday afternoon, Altman had released a lengthy statement starting with his rejection of the idea of government guarantees.

“We do not have or want government guarantees for OpenAI datacenters. We believe that governments should not pick winners or losers, and that taxpayers should not bail out companies that make bad business decisions or otherwise lose in the market. If one company fails, other companies will do good work,” he wrote on X.

He went on to explain that it was an “unequivocal no” that the company should be bailed out. “If we screw up and can’t fix it, we should fail.”

It wasn’t long before the online community started claiming that OpenAI was indeed asking for government help as recently as a week prior.

As originally noted by the X account hilariously titled “@IamGingerTrash,” OpenAI has a letter posted on its own website that seems to directly ask for government guarantees. However, as Sacks noted, it does seem to relate to powering servers and providing electrical capacity.

Dated October 27, 2025, the letter was directed to the U.S. Office of Science and Technology Policy from OpenAI Chief Global Affairs Officer Christopher Lehane. It asked the OSTP to “double down” and work with Congress to “further extend eligibility to the semiconductor manufacturing supply chain; grid components like transformers and specialized steel for their production; AI server production; and AI data centers.”

The letter then said, “To provide manufacturers with the certainty and capital they need to scale production quickly, the federal government should also deploy grants, cost-sharing agreements, loans, or loan guarantees to expand industrial base capacity and resilience.”

Altman has yet to address the letter.

This city bought 300 Chinese electric buses — then found out China can turn them off at will

A city had a rude awakening when it tested its electric buses for security flaws.

Some cities have gone all-in on renewable energy and electric public transportation, but discovering that a jurisdiction does not actually control its own public property was likely not part of the plan.

‘In theory, the bus could therefore be stopped or rendered unusable.’

This turned out to be exactly the case when Ruter — the public transportation authority for Oslo, Norway — decided to run tests on its new Chinese electric buses.

Approximately 300 e-buses from Chinese company Yutong made their way to Norway earlier this year, with outlet China Buses calling it a “core breakthrough” in Chinese brands’ global reach.

Yutong offers at least 15 different types of electric buses ranging from 60- to 120-passenger capacity.

As reported by Norwegian newspaper Aftenposten on Tuesday, Ruter conducted secret testing on some of its electric buses over the summer. It decided to look into one bus from a European manufacturer, as well as another from Yutong, to address cybersecurity risks.

The test results were shocking.

RELATED: Cybernetics promised a merger of human and computer. Then why do we feel so out of the loop?

Photo by Li An/Xinhua via Getty Images

Investigators discovered that the Chinese-built buses, unlike the European vehicles, could be controlled remotely from China.

Ruter reported that the Chinese manufacturer can remotely access software updates, diagnostics, and battery systems. “In theory, the bus could therefore be stopped or rendered unusable by the manufacturer,” the agency said.

The details were described by Arild Tjomsland, who helped conduct the tests. Tjomsland is a special adviser at the University of South-Eastern Norway, according to Turkish website AA.

“The Chinese bus can be stopped, turned off, or receive updates that can destroy the technology that the bus needs to operate normally,” Tjomsland reportedly said. He additionally noted that while the buses could not be steered remotely, they could still be shut down and used as leverage by bad actors.

Pravda Norway described the situation as the Chinese government essentially being able to decommission the buses at any time.

Photo by Lyu You/Xinhua via Getty Images

Norway’s transport minister praised Ruter for completing the tests and said the government would initiate a risk assessment related to countries “with which Norway does not have security policy cooperation.”

Ruter’s CEO, Bernt Reitan Jenssen, said the company plans on working with authorities to strengthen the cybersecurity surrounding its public infrastructure.

“We need to involve all competent authorities that deal with cybersecurity, stand together, and draw on cutting-edge expertise,” Jenssen said.

As a temporary fix, Ruter revealed the buses can be disconnected from the internet by removing their SIM cards to assume “local control should the need arise.”

There was no word as to whether the SIM cards are upsized for buses.
