Category: Tech

City of Houston Deletes X Post Referring to Good Friday as ‘Spring Holiday’ After Backlash

The City of Houston, led by Democratic Mayor John Whitmire, removed a social media post announcing that city offices would be closed Friday for a “Spring holiday” after drawing condemnation for not identifying the day as Good Friday.

The post City of Houston Deletes X Post Referring to Good Friday as ‘Spring Holiday’ After Backlash appeared first on Breitbart.

NASA’s Artemis II Moon Shot Ready to Launch at Cape Canaveral

CAPE CANAVERAL, Fla. — It’s humanity’s first flight to the moon since 1972 and it is all set to launch on Wednesday.

The post NASA’s Artemis II Moon Shot Ready to Launch at Cape Canaveral appeared first on Breitbart.

Artemis II Launches After Trump Pledges to Send Astronauts to the Moon Again

Artemis II launched into space on Wednesday hours after President Trump pledged to send U.S. astronauts to the moon.

The post Artemis II Launches After Trump Pledges to Send Astronauts to the Moon Again appeared first on Breitbart.

Can you tell the difference between the people on OnlyFans and the fakes making money on Fanvue?

Yes, a company called Fanvue has taken a step into the cyborg dystopian future with its introduction of an AI-based version of OnlyFans. New tech has made it possible and, for the moment, profitable to spin up non-human avatars — complete with voices, “personalities,” and, of course, finely tailored physical forms — to pull in the expanding audience of lonely and socially awkward or just tired, and mostly male, denizens of the fast-deteriorating cyber realm.

Fanvue, as with the bevy of similar startups hitting the internet, is essentially OnlyFans, but the twist is that the “creators” have open access to AI. Artificial voices, personages, events, acts, and so forth are all on offer in the new digital landscape. The voice, the hair, the body — none of it is real at all. Add a $100 million market capitalization, and you might see where this is going.

With both OnlyFans and its AI mimickers like Fanvue, creators upload content, followers subscribe, and whatever happens behind the paywall stays behind the paywall. (Just don’t violate the generous but firm guidelines in the Terms of Service.)

In the scramble to replace humanity online, Fanvue is, if not leading the pack, making bold strides into designing how that erasure goes down. The company boasts 200,000 “creators” on the platform, to whom it has paid out more than $500 million. Similar companies jockeying for position will likely fight over brand-name recognition and then be absorbed under some yet-to-be-determined single umbrella. Maybe it’s Fanvue. Or will OnlyFans simply buy them all?

OnlyFans creators do have at least some cachet with their existing followers. And until the next crop of perhaps less human-oriented followers steps up with debit cards in hand, the small contingent of OnlyFans creators who make a living (very attractive women) will probably continue to do well.

Photo by PG/Bauer-Griffin/GC Images

Maybe you have seen the clips of decidedly non-European men positioned in front of a camera, pantomiming, smiling, pretending. On the split screen, we can see how the Kling (or similar) motion control software instantly transmogrifies the middle-aged Indian man (from the cases we’ve seen) into a rather convincing young, highly attractive, English-speaking female (to take just one of many iterations). She’s ready to talk to you! The opportunities for delusion, fraud, and manipulation by way of the human proclivity toward self-deceit just got multiplied a thousandfold. Customer service runarounds just got 10 times more convoluted.

The assumption is that, for millions if not billions of customers, video-to-video and image-to-video technology like Kling, which allows users to transfer specific motions, facial expressions, and gestures in real time from a reference video, is more than enough to satisfy consumers as well as producers. Everybody wins!

Or not? Digital puppeteering can’t help but subvert the quality and value of human-to-human interaction — you know, that thing that started and perpetuates all of our experience on earth. Yet so dilapidated are our circumstances that it’s actually very hard to say whether or not this is an improvement in moral terms. You see, on the one hand, maybe sites such as Fanvue force most women back into the real world, where they need to interact with other real humans. On the other, maybe the price for artificial intimate interaction with digital entities stabilizes and even more young, shiftless, and financially abused men have nowhere else to turn but to simulated companions.

Justine Moore, a partner at A16z, gets credit for putting the puzzle together in a semi-viral X thread last week: “I predicted this in ’23 when I saw a few creators start using AI to sell voice clips and extra images. But now the future is here — anyone can be a hot girl online. It’s all thanks to NB Pro [and] Kling Motion Control.”

Consider that with these minor steps forward into really convincing motion transfer and voice technologies, the level of human discernment required to combat fraud, at every level, just shot through the roof. You get a FaceTime or X call from someone. Is it really that person? We are presented with an audio-visual clip of some sort, labeled “BREAKING.” Maybe it looks important, or maybe the context really has immediate impact, but we won’t be entirely sure if it’s real.

Fanvue’s big step into a very particular timeline nightmare shouldn’t have been inevitable, yet it also seems foretold. It surely spells deep trouble — and signifies a turning point where we must make an active, daily choice to be, and not just seem to be, human.

This obscure Civil War-era figure gave us a paradoxical warning. Do we have time to heed it today?

In 1865, the economist William Stanley Jevons looked at the industrializing world and noted a distinct, counterintuitive rhythm to the smoke rising over England. The assumption of the time, a naive view that persists with a certain obstinacy, was that improving the efficiency of coal use would lead to a conservation of coal. Jevons observed precisely the opposite. As the steam engines became more efficient, coal became cheaper to use, and the demand for coal did not decline; it skyrocketed.

This phenomenon, later called the Jevons Effect, suggests a fundamental truth of economics that we seem determined to forget: When a resource becomes easier and cheaper to consume, and demand for it is elastic, we do not consume less of it. We often consume a great deal more. We find new ways to burn it. We expand the definition of what is possible, not to rest, but to fill the newly available capacity with ever more work.
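The condition that demand be elastic is what does the work in that paragraph, and a toy calculation makes it easier to see. What follows is a minimal sketch, not anything Jevons wrote down: it assumes a constant-elasticity demand curve for the useful service (heat, motion, computation), and the function name `resource_use` and the `price` and `scale` values are made up for illustration.

```python
# Toy model of the Jevons Effect. All names and numbers here are illustrative
# assumptions, not figures from Jevons or from this article.

def resource_use(efficiency, elasticity, price=1.0, scale=100.0):
    """Resource consumed under constant-elasticity demand for the useful service."""
    # Better efficiency lowers the cost of each unit of useful service.
    cost_per_service = price / efficiency
    # Constant-elasticity demand: cheaper service means more of it is demanded.
    service_demand = scale * cost_per_service ** (-elasticity)
    # Each unit of service needs 1/efficiency units of the resource.
    return service_demand / efficiency


if __name__ == "__main__":
    # Compare resource use before and after efficiency doubles.
    for elasticity in (0.5, 1.0, 1.5):
        before = resource_use(efficiency=1.0, elasticity=elasticity)
        after = resource_use(efficiency=2.0, elasticity=elasticity)
        print(f"elasticity={elasticity}: {before:.1f} -> {after:.1f}")
```

Under these assumptions, doubling efficiency cuts total resource use only when elasticity is below 1. At exactly 1 the two effects cancel, and above 1 consumption of the resource rises, which is the pattern Jevons saw in coal.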

We are standing at the precipice of another such moment, perhaps the most significant since the steam engine. The age of AI is upon us, bringing with it efficiencies that promise to do for knowledge work what mechanization did for physical labor. The rhetoric surrounding this shift is familiar. We are told that AI will free us from drudgery, that it will automate the contract reviews, the basic coding, the marketing copy, and leave us with a surplus of time.

Lesson learned?

We have heard this song before. In 1930, John Maynard Keynes predicted that by 2030 technological progress would reduce the workweek to 15 hours. He imagined a world in which productivity was so high that we would opt for leisure. As we survey the frenetic landscape of the American workplace in 2026, we can see that he was largely wrong. We did not take our gains in time; we took them in goods and services.

The history of computing serves as a prologue to the current AI moment. When mainframes were scarce and costly, they were tools for the Fortune 500. As costs fell and efficiency rose, through the minicomputer era to the ubiquitous personal computer, we did not declare the problem of computing “solved.” We adopted roughly 100 times more computers with each step change in affordability. The cloud era erased barriers to entry, and suddenly a local shop could access software capabilities that, in the 1970s, were the exclusive province of massive conglomerates.

When high-level programming languages replaced the tedium of low-level coding, programmers did not write less code. They wrote much more, tackling problems that would have been previously deemed infeasible. Today, despite the existence of open-source libraries and cloud platforms that automate vast swaths of development, there are more software engineers than ever before. Efficiency simply allowed software to infiltrate every domain of life.

Photo by PATRICK T. FALLON/AFP via Getty Images

Now we have LLMs and coding agents. These tools lower the “cost of trying” still further. A task that once required a team — analyzing customer data with advanced models or building a prototype application — can now be attempted by a lone entrepreneur.

From production to orchestration

Consider Boris Cherny, the engineer who created Claude Code and used it to submit 259 pull requests in a single month, altering 78,000 lines of code. Every single line was written by Claude Code. This is not a story of labor reduction; it is a story of a single human scaling his output to match that of a large team. The barrier to initiating a software project or a marketing campaign is falling, and in response, companies are green-lighting projects they would have previously shelved.

The result is not a workforce at rest. Instead we see a shift in which the human role evolves from producer to orchestrator. We are becoming “gardeners,” cultivating and pruning fleets of AI agents. The span of control for a single worker increases, one person supervising what five or 10 might have done previously, but those displaced workers do not vanish into leisure. They move on to supervise their own agents, in different projects, expanding the frontier of what is built. This is the Jevons Effect in a strong form. The “latent demand” for knowledge work is proving to be great.

In the United States, where the cultural ethos tilts toward growth and innovation, this tendency to convert efficiency into more work is acute. Marketing employment, for example, grew fivefold over the last 50 years, precisely during the era when tools like Photoshop and Google Ads made the job in some ways easier. Efficiency turned marketing from a niche activity into a requirement for every business, spawning sub-disciplines that didn’t exist a generation ago.

Why bother?

The danger, of course, lies in the lack of distinction between “can” and “should.” An enduring lesson of the Jevons Effect is that efficiency does not confer wisdom. Technology can tell us how to execute a task faster but cannot say whether the task is worth doing. As roles transition into oversight, reviewing, and coordinating the outputs of AI, we must still ask if those outputs are solving meaningful problems. The crucial factor is human judgment. When more things are possible, the burden falls on us to decide what goals are actually worth the time.

We are not heading toward a 15-hour workweek. We are heading toward a world of expanding projects, in which efficiency lowers the cost of work and raises the amount we choose to do. The coal is cheaper, the fire is hotter, and we are shoveling as fast as we can.

How to stop Microsoft from letting the government see everything on your computer

If you think your Windows computer is safe from prying eyes, think again. A new report reveals that Microsoft has the encryption keys to your hard drive, and it can even give them out to law enforcement, including the FBI. Here’s what you need to know and what you can do to stop it from happening to you.

The story

In a stunning breach of personal privacy and security, Microsoft admitted in January that it provided the FBI with the BitLocker recovery keys to three different Windows PCs that were linked to suspected COVID unemployment assistance fraud in Guam. With these keys, the FBI was able to access the files on those devices as part of its investigation.

While it’s always great to see the federal government chase down waste, crime, and fraud, the situation raises concerns over Microsoft’s ability to access the protected files on practically anyone’s Windows PC and provide information to the government without users’ knowledge or consent.

The Redmond tech giant received its first request from a government official during the Obama administration in 2013. Although the engineer who spoke with the official reportedly declined to build a back door into Windows that would give the government unbridled access to user files, Microsoft still admits to turning over BitLocker recovery keys to law enforcement as recently as 2025. According to the report, Microsoft receives approximately 20 access requests from the FBI per year.

What is BitLocker?

BitLocker is the encryption software that comes on most modern Windows PCs. It is designed to protect the files on your hard drive from unauthorized access by locking them with an Advanced Encryption Standard algorithm. The only way to break into a computer protected by BitLocker is to either use the direct route (your login password) or to bypass security measures with a recovery key. Recovery keys for your Windows devices can be linked directly to your Microsoft account, making them accessible to both you and Microsoft itself.

Is your Windows computer at risk?

Whether or not your computer is at risk of government intrusions depends on how BitLocker was set up on your Windows PC.

You are not at risk if …

- You use a Windows PC without a Microsoft account. (You haven’t logged into the system with your Outlook email address.)

- You use a Windows PC with a Microsoft account but you chose a local recovery key backup option at activation.

- You disabled BitLocker encryption when you set up your PC.

You are at risk if …

- You use a Windows PC with a Microsoft Outlook account and you chose to back up your BitLocker recovery key to your account.

- Your PC is a work machine that’s managed by your employer.

For those at risk, Microsoft promises that it only gives out encryption keys in response to lawful requests from the government. That said, if Microsoft can access your encryption keys, what’s stopping a hacker from getting them? The problem with storing security keys on cloud servers is that anyone can reach them with the right password, login information, or exploit.
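The checklist above boils down to a few yes-or-no questions, which can be sketched as a small function. This is only an illustration of the article’s own criteria, not a Microsoft tool or API: the `PcSetup` fields and the function name are hypothetical, and treating every employer-managed machine as at risk, whatever its other settings, is an assumption taken from the list.

```python
from dataclasses import dataclass


@dataclass
class PcSetup:
    """Hypothetical description of how a Windows PC was set up."""
    bitlocker_enabled: bool
    uses_microsoft_account: bool
    key_backed_up_to_account: bool
    employer_managed: bool


def key_may_be_held_remotely(pc: PcSetup) -> bool:
    """Apply the article's checklist: True means 'at risk', False means 'not at risk'."""
    # Assumption: a work machine is at risk regardless of the other answers.
    if pc.employer_managed:
        return True
    # With BitLocker disabled there is no recovery key to hand over.
    if not pc.bitlocker_enabled:
        return False
    # Otherwise the risk comes from a key backed up to a Microsoft account.
    return pc.uses_microsoft_account and pc.key_backed_up_to_account


if __name__ == "__main__":
    home_pc = PcSetup(
        bitlocker_enabled=True,
        uses_microsoft_account=True,
        key_backed_up_to_account=True,
        employer_managed=False,
    )
    print("At risk:", key_may_be_held_remotely(home_pc))
```

Answering the four questions for your own machine tells you whether the removal steps later in this article apply to you.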

How to stop the FBI from snooping on your PC

The good news is that there are several ways to keep both Microsoft and the government out of your precious files. You can remove your BitLocker recovery key from your Microsoft account with a few simple clicks.

Photo by Matt Cardy/Getty Images

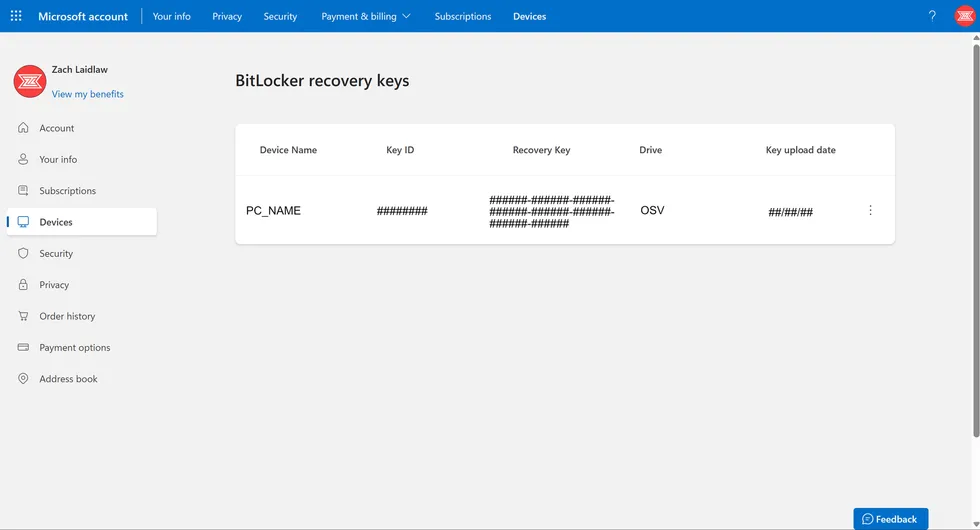

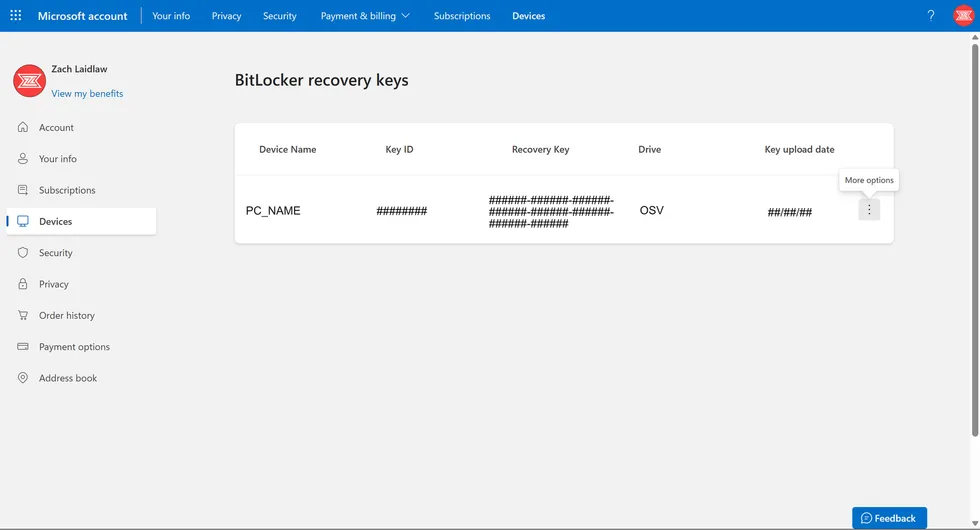

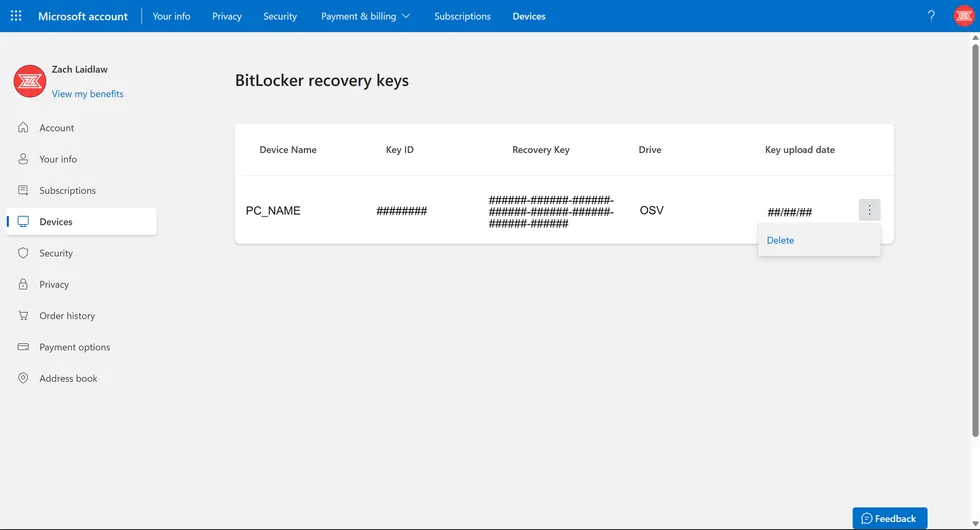

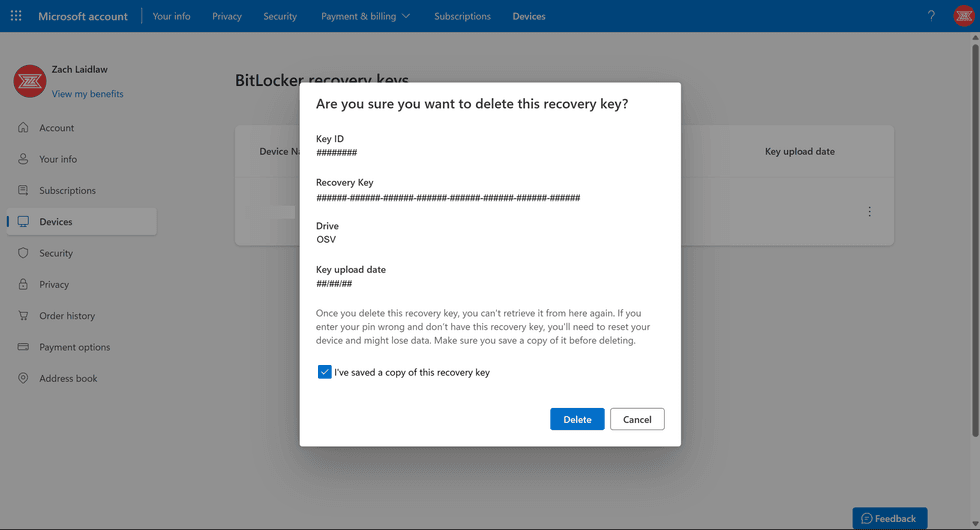

WARNING: There isn’t a way to restore your recovery key once it is deleted. Before following the steps below, make sure you write down your Key ID and Recovery Key, and keep them in a safe place, either in a physical vault or in a trusted digital password manager. Even once your key has been removed from Microsoft’s servers, it will remain active for you to use as needed. That said, here’s how to proceed.

(1) On a web browser, go to the BitLocker recovery key section on your Microsoft account.

Screenshot by Zach Laidlaw

(2) Locate your device on the list. Depending on how many Windows machines you have owned, you may have to scroll to find your current PC.

Screenshot by Zach Laidlaw

(3) Write down your Key ID and Recovery Key somewhere safe. Click the three-dotted “More Options” menu on the right.

Screenshot by Zach Laidlaw

(4) Click delete.

Screenshot by Zach Laidlaw

Your recovery key has now been removed from your Microsoft account. However, due to Microsoft’s content deletion policies, it still may take another 30 days before the recovery key is completely removed from Microsoft’s servers.

Don’t trust your Windows PC

While it is simple enough to prevent government snooping by removing your BitLocker security key from Microsoft’s system, anyone who’s especially concerned about user privacy and security should consider an alternative desktop operating system. Neither Apple nor Google saves copies of its customers’ encryption keys, ensuring that user data on Macs and Chromebooks can’t be handed over to the government, even with an official request. Linux machines are also notoriously difficult to crack in terms of digital security. As of today, Microsoft is the only major tech company that keeps encryption keys on hand, making Windows a poor choice for privacy-conscious users.

How the military is computing the killing chain

In 2025, the nomenclature caught up with the reality. For decades, the United States had operated under the fiction of a Department of Defense, a name that suggested protection, reaction, and a reluctance to engage. When Secretary Pete Hegseth signed the memoranda that would redefine the American military for the algorithmic age, the letterhead had changed. It was the Department of War again.

The revival of the old title was not merely cosmetic. It was an unapologetic signal, a shift from a defensive posture to a mission-focused one. Then between late 2025 and early 2026, Hegseth released a flurry of new memos announcing that the United States intended to become an “AI-first” war-fighting force. The language was clipped, urgent, and devoid of the hand-wringing that usually accompanies the introduction of new lethal means. The department now treats AI not as a support tool but as a core element of warfare, intelligence, and organizational power.

Reading through these documents, one is struck by the anxiety of the “algorithm gap,” which echoes the “missile gap” of the Cold War, with the stakes shifted from megatonnage to processing speed. The prevailing sentiment is that falling behind an adversary’s AI capabilities would be as catastrophic as falling behind in nuclear weapons. The Department of War does not intend to be a laggard. “Speed and adaptation win,” one memo states.

To achieve this speed, the Department has declared war on its own bureaucracy. The memos speak of a “wartime approach” to innovation, dismantling the risk-averse culture that has defined Pentagon procurement for half a century. The endless committees and boards have been dissolved, replaced with a “CTO Action Group” empowered to make quick calls. The ethos is that of Silicon Valley, grafting Mark Zuckerberg’s call to “move fast and break things” onto an institution whose business is to break things in a more literal sense.

The specific initiatives, what the Department calls “Pace-Setting Projects,” read like the chapter titles of a science-fiction novel. There is “Swarm Forge,” a project designed to pair elite war-fighters with technologists to experiment with drone swarms. There is “Ender’s Foundry,” a simulation engine meant to war-game against AI adversaries, a name that alludes without irony to Orson Scott Card’s novel about child soldiers fighting insectoid aliens. There is “Open Arsenal,” which promises to turn intelligence into weapons in hours rather than years.

Photo by ANDREW CABALLERO-REYNOLDS / AFP via Getty Images

What is being built here is “civil-military fusion,” a concept the Chinese have long championed and which the United States is now adopting with a convert’s zeal. The Department is actively courting the private sector, mentioning commercial AI models such as Google’s Gemini and xAI’s Grok. It is bringing in tech executives to run the show, with a new chief technology officer empowered to clear bureaucratic blockers.

The transformation is not limited to the battlefield but permeates the “enterprise,” a sterile word for the three million personnel who make up the Department’s nervous system. The vision is total: Under a program called GenAI.mil, every analyst, logistician, and staff officer will be issued a secure AI assistant to draft reports and code software. The goal is to embed AI systems across war-fighting, intelligence, and support functions until the distinction between soldier and data processor dissolves. The focus is on “decision superiority,” out-thinking the opponent at every turn.

The drive for decision superiority leads to a profound shift in the role of human judgment. The memos describe “Agent Network,” a project to develop AI agents for battle management “from campaign planning to kill chain execution.” They speak of “interpretable results,” a concession to the idea that humans should know why the machine decided to fire. The momentum is toward “human on the loop,” in which a human may abort an attack, rather than “human in the loop,” in which the human must initiate it. We are entering an era of “hyper-war,” in which AI systems could escalate a conflict in seconds, before a human commander can pour a cup of coffee.

The Department is betting that American ingenuity, harnessed in code, will secure the future, that it can maintain “America’s global AI dominance” through force of will and capital. The memos outline a future in which algorithms join soldiers on the battlefield, data platforms become as crucial as tanks, and decisions are increasingly informed by machines. It is a grand experiment in efficiency. We have decided that if warfare is now a battle of algorithms, we intend to algorithmically outgun the world. The name on the building has changed to reflect the reality: We are no longer defending. We are computing the kill.

Why every conservative parent should be watching California right now

Well? Do you trust Sam Altman with your kids’ online safety?

Of course you don’t. It is a category error, like asking the fox to draft the henhouse bylaws. Nevertheless, the question is now quietly circulating in Sacramento, Silicon Valley, and soon, if history is any indicator, the rest of the nation.

The world’s most powerful AI company is no longer keeping itself to the building of machines. Now it is helping to write the rules that govern them. That alone should give any serious observer pause. When the referee starts co-authoring the rule book, something has gone wrong long before the first whistle blows. And these machines, of course, are like none other in human history.

OpenAI has announced a partnership with Common Sense Media, a prominent children’s online safety group founded by Jim Steyer, brother of Tom, the billionaire environmentalist and Democratic candidate for California governor. OpenAI and CSM were previously at odds, each backing rival ballot initiatives to regulate how children interact with AI chatbots. Now? They’ve joined forces.

The result is a single proposal that could soon land on the California ballot — and, crucially, be marketed as a model for national standards.

California has long served as the Democrats’ preferred testing ground. Auto emissions standards were piloted there, then imposed nationwide. Data privacy followed the same path. So did labor rules, energy mandates, and environmental regulations that radically reshaped entire industries far beyond the state’s borders. Speaking of machines, this one has proven remarkably efficient. First comes the pilot. Then the precedent. Then the pressure. Boom — the heart of national policy is taken over from the fringe.

Once embedded, predictably, the rules harden. Especially when written into ballot initiatives, state constitutions, or dense compliance regimes that only the largest players can afford to navigate. Revision becomes politically radioactive. Repeal is painted as dangerous. Dissent is portrayed as moral failure, opposition as risky and reckless.

The stated purpose, to be sure, is unimpeachable. Protect children. Limit data collection. Add safeguards. Require age verification. Who could object? That’s precisely the point. The moral framing does the work before the policy ever does.

Photo by VCG/VCG via Getty Images

By the time questions about power, enforcement, and unintended consequences arise, the argument has already been won. After all, if you hesitate, what exactly are you saying? That children should be less safe?

But politics, especially California politics, is not about intentions. It has always been about incentives. And this arrangement raises an obvious, uncomfortable question: Why would the most dominant AI firm want to help draft the very regulations meant to restrain it?

Regulation, when shaped correctly, isn’t a burden on the powerful. Quite the opposite, in fact. It’s a moat. Compliance costs rise. Audits multiply. Smaller firms buckle. New entrants hesitate. The giants absorb the expense, hire the lawyers, tick the boxes, and continue unimpeded. In public, this is called responsibility. In practice, it’s market control with better manners.

There is also the question of timing. OpenAI and its peers are facing mounting criticism over how young people interact with AI systems. Lawsuits loom. Legislators grow restless. Parents are alarmed. Aligning with a trusted children’s advocacy group offers something priceless: moral cover. It reframes the company not as a defendant, but as a protector, a source of safety against irresponsible risk.

That shift matters.

Once a firm is cast as part of the solution rather than a leading source of the problem, scrutiny softens. Critics sound shrill, concerns are waved away as the ravings of cranks, and the company secures a seat at the table where future rules are written.

Far more mundane — and troubling — than a cloakroom conspiracy, this is regulatory capture conducted in broad daylight, wrapped up with a bow in the language of care. And you do care, don’t you?

Once California moves, the story writes itself. Headlines will hail “the strongest protections in the country.” Governors elsewhere will be asked why their states lag behind. Congress will be told a ready-made framework already exists. Why reinvent the wheel? Why delay?

And just like that, a system designed with the input of the industry it governs becomes the national baseline.

This is how power consolidates in the modern age. Forget force and secrecy. Who needs skullduggery when you have slickly deployed partnerships, press releases, and the careful use of children as moral ballast?

None of this is to deny that children need protection online. They do. The digital world is unforgiving, full of predators and rabbit holes that lead nowhere good. No serious person disputes that. However, safeguards crafted in haste — or worse, convenience — rarely age well.

In a brutal irony, though, a process meant to protect the young can instead shape a future where oversight is ossified, competition is stifled, and the most influential technology of our era answers primarily to itself.

California is once again the laboratory. The rest of the country is expected to follow.

So the opening question bears repeating. Do you trust Sam Altman, and companies like his, to help decide what your children are allowed to say, read, ask, or imagine? The question answers itself. What remains unanswered is whether the rest of the country will be given a choice.

Toxic femininity crushed his gaming dream. Then the internet found out.

While video game player demographics are split almost down the middle — 53% male and 47% female — the gap is vastly wider when it comes to esports. Men dominate the category, taking up 95% of the available spots in game tournaments, while women only account for 5%. So what happens when a skilled male player gets paired with a less experienced group of girl gamers with a $12,000 grand prize on the line? Naturally, they kick him off the team in the name of misogyny.

What happened?

On January 18, 2026, streamer Kingsman265 went live on his channel as he met up with several other players in preparation for a Marvel Rivals tournament with a prize pool of $40,000, with $12,000 going to the winning team to be split among four players. As a rank 1 player himself, Kingsman265 had his eyes set on the prize money, which he planned to use to pay his college tuition, and he actually had a solid shot at winning it. However, during a practice match before the tournament, he quickly realized that his teammates were more interested in feminist politics than in winning.

The rest of the team was composed of three female players named Cece, Zazzastack, and Luciyasa. Kingsman265 quickly suggested a change to the team’s character lineup, recommending that they run a triple support setup to give their team the best shot at victory. The pushback was immediate, as the female players rejected his warnings, opting to play with characters they were familiar with instead of using a loadout that was more effective, especially in a tournament setting.

Things went downhill from there. Tensions rose at several points throughout the video, with Cece telling him to shut up after he pleaded his case for a triple support setup, even after he explained that they would lose without it. Another teammate told him to “shut the f**k up” after they lost a practice match, a moment that vindicated Kingsman265, as it displayed the team’s vulnerabilities in real time. Then Cece ended with “this is f**king getting annoying” as Kingsman265 continued to urge the team to change their strategy, to no avail.

The team went their separate ways to play ranked games apart for the night. Shortly after, Kingsman265 learned that he was kicked out of the tournament entirely by the organizer, BasimZB, for his allegedly “toxic” behavior. Basim later admitted that he made the wrong decision based on “misinformation” from Cece and her team.

Kingsman265 was ultimately relegated to the sidelines for the Marvel Rivals tournament, leaving him behind to watch his team get knocked out in the first round, proving that his instincts around their character lineup were correct.

The fallout

As the male gamer in this situation, Kingsman265 was made to look like the bad guy. He was unceremoniously kicked out of the tournament due to his “toxicity” in ganging up on three girl gamers as he tried to spur them to victory. Instead of recognition that his skills, knowledge, and ranking were proof that he knew what he was doing, his warnings were positioned as insults.

Photo By Eduardo Parra/Europa Press via Getty Images

It wasn’t until Kingsman265 posted his video of the practice match, along with a conversation between Cece and himself dubbed the Cece Files, that the truth came to light. Not only did Cece and her team want Kingsman265 to be banned from the tournament, but they conspired to remove him, claiming that “there are plenty of people in line who are just as good. Kingsman, like everybody else, is replaceable.”

The aftermath was swift, with the internet quickly turning on the female team in favor of Kingsman265. Although he told anyone who saw the video not to harass Cece and company, the message exchange between them shows that the internet has no tolerance for liars. She begged Kingsman265 to take down the video or, at the very least, cut out the incriminating parts that made her and the team look guilty, but he refused, noting that it was a legitimate video. Cece lost several sponsorship deals and partnerships for her behavior.

All’s well that ends well

The whole debacle cost Kingsman265 his shot at a $3,000 grand prize to help pay off his college debt, but what came next was even sweeter. Once he was exonerated of any wrongdoing, Kingsman265 saw a huge boost to his channel, netting 139,000 followers on Twitch (and counting), 10,000 paying subscribers, and instant acceptance into the Twitch Partner Program, which will allow him to earn money for streaming online. As an added bonus, he received more than $3,000 in donations from supporters, surpassing the amount he would have earned from winning the Marvel Rivals tournament, and Marvel Rivals developer NetEase even sent him credits to buy skins for his character.

The good guy won in the end, leaving the all-girls team with a major loss in the tournament, loss in internet clout, and loss in their streaming careers. All of it could have been avoided if they had not made Kingsman265 out to be the toxic misogynist that he wasn’t, but if that had happened, his own gaming career wouldn’t be rocketing through the stratosphere at this very moment.

What happens online lives forever — the lies that are told and the truth that comes through in 4K — and the consequences are unavoidable. This is why it is always important to keep your receipts when tension erupts on the internet. You never know when you’ll have to defend yourself against cheats and liars who think they control the narrative.

How Americans can prepare for the worst — before it’s too late

Imagine standing in a war-torn city overseas, as I have on numerous deployments, walking through communities shattered not just by bombs and sectarian conflict, but by the follow-on failure of basic systems — water, power, food, even the educational system.

It’s a stark reminder that resilience isn’t abstract; it’s the difference between chaos and recovery. Back home, over 20 million Americans reported in 2023 that they could last at home for a month or more without publicly provided water, power, or transportation, a rate more than double that reported in 2017.

This trend is not occurring because of government guidance, but rather because of a perceived fear of government failure. Across the world, civil defense and national preparedness are surging in discussions, extending beyond disasters or war to encompass health, economics, energy, and the social, spiritual, and built environments of our communities.

Civilians have an active role to play and should not passively wait for government salvation.

The core question remains: Are we truly resilient?

Identifying gaps

In 2019, Quinton Lucie, a former attorney for the Federal Emergency Management Agency, wrote a blistering academic piece in Homeland Security Affairs. He argued that America no longer has the institutional experience or framework required for civil defense, a major pillar of overall national resilience. In his words, the U.S. “lacks a comprehensive strategy and supporting programs to support and defend the population of the United States during times of war.” Retired Air Force General Glen D. VanHerck, the former commander of the North American Aerospace Defense Command and U.S. Northern Command, recently commented that America needs to be able to “take a punch in the nose … and get back up and come out swinging” regardless of whether the attack comes in the cyber realm or by conventional means.

An all-inclusive plan is not optional. Presidential Executive Order 12656 mandates whole-of-government responsibilities for various national security emergencies. Article Three of the 1949 North Atlantic Treaty, which created NATO, stipulates resilience, focusing on continuity of government, essential services for citizens, and military support. Implicitly, it calls on individuals to step up too — not just for war, but for natural disasters, economic slumps, or grid failures.

While non-binding, the 2020 NATO NSHQ Comprehensive Defence Handbook states that “resilience is the foundation atop the whole-of-society bedrock” and “is built through civil preparedness and is achieved by continually preparing for, mitigating, and adapting to potential risks well before a crisis.” The challenge is that civil preparedness requires this whole-of-society approach, not just a whole-of-government one. That is, we can’t have a strong nation without strong individuals and communities.

Facing perils head-on

What other perils might we confront? Food security is a prime example. During the U.S. government shutdown, food banks near bases experienced a 30%-75% surge from military families. This comes at a time when 42 million Americans are on food stamps and Secretary of War Pete Hegseth and Secretary of Health and Human Services Robert F. Kennedy Jr. push for a healthier fighting force and populace. Globally, a February 2025 report by the U.K.’s National Preparedness Commission indicated that civil food resilience is highly vulnerable to myriad shocks to the status quo and that the populace was underprepared.

Photo by DAVID PASHAEE/Middle East Images/AFP via Getty Images

Utilities failures like water and electricity are another concern. In October 2025, the former top general of the National Security Agency warned of China’s aggressive targeting of U.S. critical infrastructure. This aligns with China’s “Three Warfares” strategy, which seeks to manipulate or weaken adversaries via public opinion warfare, psychological warfare, and legal warfare. China’s gray-zone activities against the U.S. also include synthetic narcotics like fentanyl and online actions to deepen political fissures.

Leaders are not sitting still. President Trump supports reshoring manufacturing capacity in the U.S. Onshoring and friend-shoring are hot topics among various industries, given rare-earth metal availability, tariffs, and general uncertainty. The U.S. Army is bolstering energy resilience, planning nuclear small modular reactors on nine bases by late 2028 and reclaiming a “right to repair” in contracts.

Big business is also in on the action. Jamie Dimon, CEO of JPMorganChase, recently announced a $1.5 trillion plan for a more resilient domestic economy, seeing it as an issue of national security. With two Federal Reserve rate cuts in 2025 potentially fueling inflation, hedge fund billionaire Ray Dalio advises a 15% portfolio allocation to gold. Even Jan Sramek of California Forever is investing hundreds of millions to build a resilient city near San Francisco. Resilience, clearly, permeates every facet of life.

Resilience is global

This is not unique to the English-speaking world. Latvia, a small Baltic state bordering Russia and Russia’s ally Belarus, exemplifies a whole-of-society approach. The nation’s 2020 State Defense Concept, currently in execution, is comprehensive in its approach, both to potential perils and responsibilities. Accidents, pandemics, war, severe weather, and cyberthreats all require a citizenry-to-parliament strategy. The church plays a major role, as do physical fitness, patriotism, and education, which is why state defense is now compulsory in Latvian schools.

Germany is getting back into the bunker business and has earmarked €10 billion through 2029 for civil protection. Many Polish citizens do not see their government doing enough and are taking matters into their own hands by building bunkers and attempting, unfortunately without much success, to establish neighborhood civil defense groups.

What resilient citizens can do

What should we take from this? First, preparedness is neither fringe nor irrational. It is a global movement involving politicians, billionaires, and everyday people. Second, there is no one-size-fits-all solution. Resilience spans the full human spectrum: social, physical, intellectual, emotional, and spiritual components, as I outline in my book “Resilient Citizens” through frameworks like the five archetypes (from Homesteaders to the Faithful) that show diverse, adaptable paths. Third, civilians have an active role to play and should not passively wait for government salvation. Tiered responsibility requires each echelon, from state to citizen, to play its part, own its agency and responsibility, and act. Will you?
