Category: Tech

Star Wars: OpenAI CEO Sam Altman Wants to Buy a Rocket Company to Take on Elon Musk’s SpaceX

AI may soon reach beyond Earth as OpenAI CEO Sam Altman looks to the stars for a solution to the growing energy demands of data centers. Altman is reportedly considering an investment in a rocket company to take on bitter rival Elon Musk’s SpaceX.

The post Star Wars: OpenAI CEO Sam Altman Wants to Buy a Rocket Company to Take on Elon Musk’s SpaceX appeared first on Breitbart.

CRASH: If OpenAI’s huge losses sink the company, is our economy next?

ChatGPT has dominated the AI space as the first generative AI platform to reach the market, and its lion’s share of users grows every month. However, despite its popularity and huge investments from partners like Microsoft, SoftBank, NVIDIA, and many more, its parent company, OpenAI, is bleeding money faster than it can make it, raising the question: What happens to the generative AI market when its pioneering leader bursts into flames?

A brief history of LLMs

OpenAI essentially kicked off the AI race as we know it. Launched three years ago on November 30, 2022, ChatGPT introduced the world to the power of large language models (LLMs) and generative AI, completely uncontested. There was nothing else like it.


At the time, Google was still testing its Language Model for Dialogue Applications (LaMDA). You might even remember a story from 2022 about Google engineer Blake Lemoine, who claimed that Google’s AI was so smart that it had a soul. He was later fired from Google for his comments, but the model he referenced was the same one that first powered Google Bard, which later became Gemini.

As for the other top names in the generative AI race, Meta launched Llama in February 2023, Anthropic introduced the world to Claude in March 2023, Elon Musk’s Grok hit the scene in November 2023, and there are many more beneath them.

Needless to say, OpenAI had a huge head start, becoming the market leader overnight and holding that position for months before the first competitor came along. On a competitive level, all major platforms have generally caught up to each other, but ChatGPT still leads with 800 million weekly active users, followed by Meta with one billion monthly active users, Gemini at 650 million monthly active users, Grok at 30.1 million monthly active users, and Claude with 30 million monthly active users.

Financial turmoil for OpenAI

Just because ChatGPT is the leading generative AI platform does not mean the company is in good shape. According to a November earnings report from Microsoft — a major early backer of OpenAI — the AI juggernaut lost $11.5 billion in the last quarter alone. To make matters even worse, a new report suggests that OpenAI has no path to profitability until at least 2030 or later, and it needs to raise $207 billion in the interim to stay afloat.
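To put those two figures side by side, here is a rough back-of-the-envelope sketch. It assumes, purely for illustration, that the $11.5 billion quarterly loss stays constant and that the $207 billion would go entirely toward covering it; neither assumption comes from the reports cited above, and the function name is hypothetical.

```python
# Figures quoted in the article, in billions of US dollars.
QUARTERLY_LOSS_BN = 11.5
FUNDING_NEEDED_BN = 207.0


def quarters_of_runway(funding_bn: float, loss_per_quarter_bn: float) -> float:
    """How many quarters a pile of funding lasts at a constant loss rate.

    Assumption (not from the source): losses neither grow nor shrink.
    """
    return funding_bn / loss_per_quarter_bn


quarters = quarters_of_runway(FUNDING_NEEDED_BN, QUARTERLY_LOSS_BN)
years = quarters / 4
print(f"{quarters:.1f} quarters, or about {years:.1f} years")
```

Under those simplified assumptions, the funding would cover 18 quarters, or about four and a half years, which is roughly in line with the reported 2030 horizon.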

By all accounts, OpenAI is in serious financial trouble. It is bleeding money faster than it makes it, and unless something changes, the generative AI pioneer could be on the verge of a complete collapse. That is, unless one of these Hail Marys can save the company.

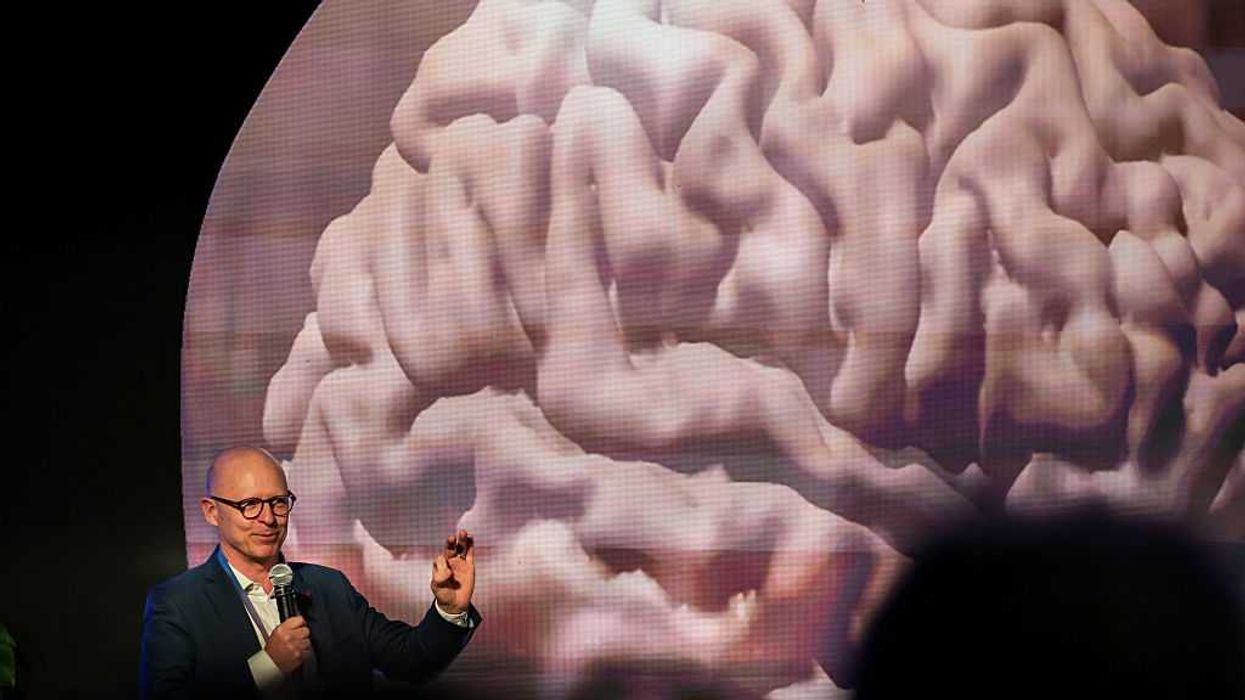


Photo By David Zorrakino/Europa Press via Getty Images


The bid to save OpenAI

OpenAI is currently looking into several potential revenue streams to turn its financial woes around. There’s no telling which ones will pan out quite yet, but these are the options we know so far:

For-profit restructure

When OpenAI first emerged, it was a nonprofit company with the goal to improve humanity through generative AI. Fast-forward to October 2025 — OpenAI is now a for-profit organization with a separate nonprofit group called the OpenAI Foundation. While the move will allow OpenAI’s profit arm to increase its earning potential and raise vital capital, it also received a fair share of criticism, especially from Elon Musk, who filed a lawsuit against OpenAI for reneging on its original promise.

A record-breaking IPO

The for-profit restructure has another big perk: OpenAI can now go public on the stock market. According to an exclusive report published by Reuters in late October, OpenAI is putting the puzzle pieces together for a record-breaking IPO that could value the company at up to $1 trillion. Not only would the move make OpenAI a publicly traded company, it would also give it more access to capital and acquisitions to further bolster its products, services, and economic stability.

Ad monetization

Online ads are the lifeblood of many online websites and services, from Google to social media apps like Facebook to mainstream media and more. While AI platforms have largely stayed away from injecting ads into their results, OpenAI CEO Sam Altman recently said that he’s “open to accepting a transaction fee” for certain queries.

In his ideal ad model, OpenAI could potentially take a cut of any products or services that users look for and buy through ChatGPT. This structure differs from Google’s, which lets companies pay to bring their products to the top of search results, even if the products they sell are poorly made. Altman believes that his structure is better for users and would foster greater trust in ChatGPT.

Government projects and deals

While Altman recently denied that he’s seeking a government bailout for OpenAI’s financial troubles, the company can still benefit from government deals and projects, the most recent one being Stargate. As a new initiative backed by some of the biggest players in the AI space, Stargate will give OpenAI access to greater computing power, training resources, and owned infrastructure to lower expenses and increase the speed of innovation as they work on future AI models.

If OpenAI fails …

While OpenAI has several monetization options on the table — and perhaps even more that we don’t know about yet — none of them are a magic bullet that’s guaranteed to work. The company could still collapse, which brings us to our question at the top of the article: What happens to the generative AI market if OpenAI fails?

In a world where OpenAI fizzles entirely, there are several other platforms that will likely fill the void. Google is the top contender, thanks to the huge progress it made with Gemini 3, but Meta, xAI, Anthropic, Perplexity, and more will all want a piece.

That said, OpenAI isn’t the only AI platform struggling to make money. According to Harvard Business Review, the AI business model simply isn’t profitable, largely due to high maintenance costs, huge salaries for top AI talent, and a low-paying subscriber base. In order to keep the generative AI dream alive, companies will need a consistent flow of capital, a resource that’s more accessible for established companies with diverse product portfolios, like Google and Meta, while the newer companies that only build LLMs (OpenAI and Anthropic) will continue to struggle.

At this stage in the AI race, there’s no doubt in my mind that the whole generative AI market is a big bubble waiting to burst. At the same time, AI products have been so fervently foisted on society that it all feels too big to fail. With huge initiatives like Stargate poised to beat China and other foreign nations to artificial general intelligence (AGI), the AI race will continue, even if OpenAI no longer leads the charge. If I were a betting man, though, I would guess that someone important finds a way to keep Sam Altman’s brainchild afloat one way or another, even as all signs point toward OpenAI spending itself out of business.

Elon Musk and Jeff Bezos are racing to enclose Earth in an orbital computer factory

In Memphis, Tennessee, where Elon Musk’s xAI initiative spun up a “compute factory” of some 32,000 GPUs, the local grid could not sustain the demand. The solution was characteristic of the era: 14 mobile gas turbine generators, parked in a row, burning fossil fuel to feed the machine. It was a scene of brute industrial force, a reminder that the “cloud,” for all its ethereal branding, is a heavy, hot, loud thing. It requires acres of land for the servers, rivers of water for cooling, and enough electricity to power a small nation.

The appetite of AI is proving insatiable. To reach the next plateau of synthetic cognition, we must triple our electrical output and are constrained by our capacity to do so. And so, with the inevitability of water seeking a lower level, the gaze of Silicon Valley has drifted upward. If the earth is too small, too regulated, and too fragile to house the machines of the future, we shall instead build them in the sky.


The proposal is startling, in the way that leaps in engineering often are. In late 2025, Musk noted on social media that SpaceX would be “doing” data centers in space. Jeff Bezos, a man who has long viewed the planetary surface as a sort of zoning restriction to be overcome, predicted gigawatt-scale orbital clusters within two decades.

The pitch is seductive: In the vacuum of low-Earth orbit, the sun never sets. There are no clouds, no rain, no neighbors to complain. There are only the burning fusion of the sun and the cold of deep space, which turns out to be the perfect medium for cooling the heated circuits of a neural network.

The vacuum is valuable because it is an infinite heat sink. The sunlight is valuable because it is free voltage. The plan, as outlined by startups such as Starcloud (formerly Lumen Orbit), involves structures that defy terrestrial intuition. These are not the tin-can satellites of the Cold War but solar arrays and radiator panels four kilometers wide, vast shimmering sheets assembled by swarms of robots. These machines, using technology like the MIT-developed TESSERAE tiles, would click together in the silence, building a cathedral of computation that no human hand will touch.


Photo by BRENDAN SMIALOWSKI/AFP via Getty Images


There is a stark beauty to the engineering. On Earth, a data center fights a losing battle against entropy, burning energy to pump heat away. In space, heat can be radiated into the dark. A server rack in orbit, shielded by layers of polymer and perhaps submerged in fluid to dampen the cosmic rays, swims in a bath of eternal starlight, crunching the data beamed up from below. Companies such as NTT and Sky Perfect JSAT envision optical lasers linking these satellites into a single, glowing lattice: a cosmic village of information.

Yet one cannot help but observe its fragility. The modern GPU is a miracle of nanometer-scale lithography, a device so sensitive that a stray alpha particle can induce a chaotic error. The environment of space is hostile, awash in the very radiation that these chips abhor. To place the most delicate artifacts of human civilization into the harshest environment known to physics is a gamble. The engineers speak of “annealing” solar cells and triple-redundant logic. The skeptic notes that a bit-flip in a language model is a nuisance, while a bit-flip in a battle management system is a tragedy.
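For readers unfamiliar with “triple-redundant logic,” the idea can be sketched in a few lines: keep three copies of each value and let a majority vote decide every bit, so that a single flipped bit in one copy is outvoted by the other two. The snippet below is a simplified illustration only. Real radiation-hardened systems do this in hardware, and the function names and sample value here are hypothetical.

```python
def majority_vote(a: int, b: int, c: int) -> int:
    """Bitwise majority of three redundant copies of the same word.

    Each output bit is 1 only if at least two of the three inputs have it set.
    """
    return (a & b) | (a & c) | (b & c)


def flip_bit(word: int, bit: int) -> int:
    """Simulate a radiation-induced upset by inverting one bit."""
    return word ^ (1 << bit)


original = 0b10110010           # hypothetical stored value
copy_a = original
copy_b = flip_bit(original, 5)  # one copy is corrupted in orbit
copy_c = original

recovered = majority_vote(copy_a, copy_b, copy_c)
print(recovered == original)
```

The vote recovers the original value as long as only one copy is hit at a given bit position. If two copies suffer the same flip, the corrupted value wins the vote, which is one reason the skeptics are not fully reassured.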

There is also the matter of the debris. We have already cluttered orbits with the husks of our previous ambitions: spent rocket stages, dead weather satellites, flecks of paint moving at 17,000 miles per hour. To introduce massive, kilometer-scale structures is to invite the Kessler syndrome, a cascade of collisions that could imprison us on the surface for generations. We are proposing to solve the environmental crisis of terrestrial computing by potentially creating an environmental crisis in the exosphere. It is the American way, the frontier way: When one room gets messy, simply move to the next, larger room.

The drive to do this is not merely economic, though the economics are potent. If Starship can lower the cost of launch to under $200 per kilogram, the math begins to close. If energy in space is effectively free, the initial capital outlay is justified by the lack of a monthly utility bill. But the impulse is also older, that of the Russian scientist and mathematician Konstantin Tsiolkovsky, who called Earth the “cradle” of humanity, one that we, like any maturing human being, must eventually leave. We are seeing the embryonic stages of the “noosphere,” a sphere of pure mind encircling the planet. By exporting our cognition to the heavens, we are externalizing our logic. The logos of our civilization will physically reside above us, a silent pantheon of servers ordering and facilitating the lives of the creatures below.

There is a geopolitical texture to this as well. The concept of “sovereign cloud” takes on a new meaning when the data center is orbiting over international waters. Intelligence agencies and defense contractors are quietly investing, sensing that the high ground of the 21st century is not a hill, but an orbit. To control the compute is to control the speed of thought.

Whether this will work remains to be seen. The history of spaceflight is a graveyard of optimistic PowerPoints. It is possible that the radiation will act as a slow acid on the silicon, that the robotic assembly will jam, that the cost will remain stubbornly high. But the momentum is real. The mobile gas turbines in Memphis are a stopgap. The data centers consuming the aquifers of Arizona are a liability. The logic of the market and the machine points upward.

We stand at a peculiar intersection. We are attempting to use the most primal forces of the solar system, the burning star and the freezing void, to power our most refined tools. It is a grand, ambitious, and entirely human endeavor. We are building a computer in a jar and hanging the jar in the sky, hoping that the view will be clear enough to see the future.

Nvidia CEO Jensen Huang Praises Trump on Joe Rogan Podcast: ‘Very Practical, Common Sense, and Logical’

Nvidia CEO Jensen Huang praised President Donald Trump on Wednesday, telling podcaster Joe Rogan that “everything” the president “thinks through is very practical, common sense, and logical.” Huang also credited Trump’s energy policy with saving AI, telling Rogan, “Without energy growth, we can have no industrial growth. And that was what saved the AI industry.”

The post Nvidia CEO Jensen Huang Praises Trump on Joe Rogan Podcast: ‘Very Practical, Common Sense, and Logical’ appeared first on Breitbart.

Rejoice, Jared Leto fans! Time to fall asleep on your couch watching ‘Tron: Ares’

This week, “Tron: Ares,” the blockbuster that wasn’t, makes its final bid for profitability — hitting the streaming services, complete with a bonus deleted scene. As Hollywood continues its messy quest to restore its lost glory, what better time for a postmortem?

“Tron: Ares” tells us much. This trilogy-completing movie should have been a layup. With the film, Disney had a great existing piece of intellectual property and a time that could not have been better for a sequel. You can’t go a day on the internet without hearing about AI, surveillance, data centers, hacking, and other topics that the “Tron” universe is uniquely qualified to address.


The question was: Could Disney pull off a sequel to a pair of movies released in 1982 and 2010 while delivering a quality film that made compelling points on the future of Big Tech and the ever-changing interplay between AI and humanity? The Disney modus operandi is usually to serve up a disappointing experience of woke talking points, lazy writing, and uninspired filmmaking. “Tron: Ares” offered the studio the chance to buck that trend.

The centerpiece of the “Tron” universe is a digital world called the Grid. For the uninitiated, this alternate world, existing inside computer systems, appears as a neon-lit, mirror-smooth alternative to our own. Computer programs inhabit humanoid forms and live in strict, hierarchical societies.

Its well-crafted lore merits a catch-up. In the original “Tron” movie (1982), brilliant programmer Kevin Flynn is attempting to hack into the system of his former employer, ENCOM, to prove that another employee, Ed Dillinger, plagiarized Flynn’s work to get ahead at the company. Flynn ends up getting transported onto the Grid via particle laser and battles the Master Control Program that is attempting to influence the real world. He is successful, proves that Dillinger plagiarized his work, and ends up as CEO of ENCOM. The franchise gets its title from a program named Tron, which fights alongside Flynn.

In “Tron: Legacy” (2010), Kevin Flynn expands his Grid and ends up getting stuck there, vanishing from the real world. His son Sam has inherited control of ENCOM, now a top tech company, but refuses to step into a leadership role. He goes looking for his father and ends up having his own adventure on the Grid, working alongside his father to outwit Clu, the program that betrayed his father and took control of the Grid. His father sacrifices himself to allow Sam to escape back to the real world along with Quorra, a female “isomorphic algorithm,” that is, a computer program manifested onto the Grid without any human contribution. Sam and Quorra end the film setting out to make the world a better place with the Grid technology.

High concept, low plot

Here’s where the slapdash takes over from the archetypal. “Tron: Ares” picks up 15 years later with a healthy dose of the now-classic Disney bait and switch. Forget Sam, Quorra, Tron, or any of the popular characters from the previous installments. Sam, in a “somehow, Palpatine returned”-level move, has “left ENCOM for personal reasons.” Instead, we are introduced to his replacement: Eve Kim (Greta Lee). The bait and switch, along with other now-classic Disney tropes, is present throughout the film, but more on that later.

Let’s break down the plot. (Warning: inevitable spoilers below.)


Photo by Jean Catuffe/GC Images


There are two massive tech companies: ENCOM Technologies, now run by Eve Kim, and Dillinger Systems, run by Julian Dillinger, the grandson of Ed Dillinger from the first movie. These companies have figured out how to use particle lasers to bring things from the Grid into the real world. They basically just 3D-print tanks, ships, trees, people, anything at all, using nothing but electricity. How does that actually work? Never mentioned.

These Grid creations only last 29 minutes before disintegrating into dust and reappearing on the Grid. Eve Kim is determined to solve this problem by finding the permanence code, which Kevin Flynn supposedly hid somewhere. She holes up in Flynn’s old hideout in Alaska and starts looking for it while her male assistant, Seth Flores (Arturo Castro), sits around eating breakfast burritos and complaining that she doesn’t pay enough attention to him. Meanwhile, Dillinger Systems is presenting its new Master Control Program, Ares (Jared Leto). Julian Dillinger leaves out the fact that Ares only lasts 29 minutes, for which he is reprimanded by his mother (Gillian Anderson), who provides the conscience and competence at Dillinger.

Eve finds the permanence code and successfully tests it, then gets a call from ENCOM’s necessarily diverse CTO Ajay Singh (Hasan Minhaj). He tells Eve that Dillinger has hacked ENCOM’s server and caused all sorts of damage. Basically, Julian learned that Eve has the permanence code, and he wants to get his toxic white hands on it.

The rest of the movie is a series of action scenes strung together by the bare bones of a story. Ares is sent into the real world to get the code from Eve. He comes close, forcing her to destroy the flash drive, but fails when he hits his 29-minute shot clock. Eve is then transported onto the Grid when another Dillinger agent shoots her with a particle gun. Once there, the code can be extracted from her now-digital mind. This process would kill her, but Julian orders Ares to proceed. Ares, who has shown signs of straying from his programming, goes rogue and helps her to escape, asking for the permanence code in return so that he can live in the real world. Eve agrees, and the rest of the movie is basically them running around trying to get the code (remember, Eve destroyed the drive, so now they have to find it again) before Dillinger’s new MCP, Athena (Jodie Turner-Smith), catches them.

They end up finding a way to access it on Kevin Flynn’s old computer and send Ares onto the original Grid from the first “Tron” movie. Once there, he meets Kevin Flynn, or rather some sort of aspect or memory of him — it is never made clear — and gets the permanence code after Flynn determines that he is suitably curious (or something) enough to become human. Meanwhile, Athena is determined to catch Eve and brings a few Grid vehicles into the real world for a rather underwhelming final battle.

Things wrap up when Ares arrives back in the real world just in time to save Eve, while Ajay and Seth hack the Dillinger mainframe and shut it down, disabling Athena, whose sympathetic death scene feels like a DEI box-check. The film concludes with Eve using the permanence code to lead ENCOM in transforming various industries and Ares wandering the world under cover, learning how to live among humans.

The good, the bad, and the utterly predictable

There are three main takeaways from this film, but first I need to defend Jared Leto for a second. I know there are plenty of reasons, professional and otherwise, for people to dislike Leto, and I’m not necessarily disagreeing with them. However, I thought his performance in this film was quite good. The physical choices he makes in portraying his AI character add a subtle, uncanny-valley aspect to Ares. The best part of the performance, though, is the vocal work. Leto manages to give a digital quality to Ares’ speech without resorting to crude robotic tones. He uses careful pitch and tone changes and curates his pauses to give the effect of an LLM responding to a prompt, without losing the organic quality of human voice and speech. It is very well done, and the delivery works perfectly with the dialogue written for his character.

I’ve seen a lot of complaints about the acting in “Tron: Ares,” and some of it is warranted. However, as is so often the case, people are blaming the actors when a large part of the problem is bad dialogue. Seriously, you try turning the line “I don’t like sand; it’s coarse and rough and irritating, and it gets everywhere. Not like here. Here everything is soft and smooth” into an earnest, romantic phrase. The acting in “Tron: Ares” is mostly fine, and in Leto’s case, it is very impressive. Sure, it’s not all amazing, but the dialogue is clearly the bigger issue. The exception is the portion written for Ares. I suppose feeding prompts into ChatGPT actually worked in that case.

Another issue is the Disney tropes that permeate the film. They didn’t bother me that much because they are so worn out at this point. There is, of course, the IP bait and switch, in which a studio baits an audience with a familiar IP, character, etc. and then switches it out for a DEI replacement. Throwing out the entire Flynn family and replacing them with a diverse CEO girlboss is the relevant example here. If you’re still falling for this move in A.D. 2025, let me just say, as a longtime “Star Wars” fan, you wouldn’t last an hour in the asylum where they raised me.

Like any modern Disney movie, “Ares” adheres to what we might call the CCCC: color and chromosome competence correlation. Eve is a woman of color and therefore exceedingly competent and driven. Her Hispanic assistant, Seth, is light enough to be belittled for his manhood, but diverse enough to be portrayed as a competent force for good. Ajay, the CTO, is an Indian man. His complexion is darker than Seth’s, making him more competent. The film makes certain we know it is Ajay who actually manages to get into the Dillinger mainframe. However, being Indian means he is not dark enough to be excluded from male penalties. Therefore, he gets a personality that is Kash Patel turned tech bro, and part of his competence and drive are outsourced to his female assistant, Erin.

The CCCC applies across the moral spectrum. Julian Dillinger might be an evil tech villain, but he is also a white man and cannot, therefore, be competent or have real authority. These qualities are supplied by his mother, Elisabeth. Athena, the program who takes over as the Dillinger MCP, is played by a black woman (get it — Black Athena?) and is therefore competent and driven. Her failure is not her fault, but the result of Ajay truth-nuking the Dillinger Grid. In “Ares,” these tropes were too worn out to be troubling; they were just boring. I’m tired of being able to predict films after a passing glance at the principal characters.

Like the tropes, the film’s treatment of AI is just boring. The “Tron” universe is full of interesting AI potential, but “Ares” doesn’t go for any of them. The permanence code, which is a double helix as opposed to regular binary code (maybe I’m just a tech neophyte, but I thought that was cool), is never explained or explored. There is no real attempt to look at what the 3D-printed Grid creations actually are and what makes them work. If you can digitize a person’s mind by bringing him onto the Grid, that opens up all kinds of fascinating possibilities. “Ares” does not explore any of these paths. Rather, it goes for the same old “what if AI started becoming human” line that is pretty worn out at this point. Gareth Edwards’ “The Creator” did the whole “you should empathize with AI when it acts human” routine much better, but it isn’t very convincing in that film, either. In “Tron: Ares,” the wasted potential just makes the result more frustrating, which brings us to the final point and the biggest issue I have with the film.

At the end of the day, “Tron: Ares” is slop. It is content conceived and designed to be just that and nothing more. AI could have written this film, which might be by design (in which case, my apologies, Jesse Wigutow, I was not familiar with your game), but I don’t think so. It is not just the lack of explanations or the fact that anyone with an IQ above room temperature could predict the entire film after 10 minutes. Everything in this film feels like it was cut and pasted from a general template for “popular high-budget sci-fi movie.”

Who will take these missed opportunities?

So what went wrong? Well, leaving aside the obvious “don’t be woke” talking point, the main issue was misunderstanding the sort of IP the filmmakers were dealing with. At its core, “Tron” is a story about computers, not just a sci-fi universe of shiny alternate realities. Ignoring this fact robs “Ares” of the necessary thematic continuity for any good sequel. Instead, the film relies on cheap nostalgia and throwaway references, refusing to use the unique set of tools it has to tell a compelling story.

To take just one example, the ability to digitize the human mind — that alone offers a more compelling and relevant story. If you can digitize the human person, storing people on the grid, what does that say about the human soul? What does it mean for surveillance, incarceration, and memory? In a time of privacy concerns and AI data-farm controversies, a computer server with the ability to store, alter, or destroy human consciousness — not to mention the capacity for independent evolution and generation — sets up a whole list of compelling questions, themes, and plot points.

If you want to understand what I’m getting at, compare the soundtrack — an album by Nine Inch Nails that sounds more like GPT — to the “Tron: Legacy” soundtrack by Daft Punk, a now-legendary, pitch-perfect expression of the computer/reality synthesis that the franchise just couldn’t live up to.

The soundtrack isn’t the only place where “Tron: Ares” is a downgrade from “Legacy.” So let me offer some advice: If you find yourself looking to stream an AI-themed sci-fi movie, just watch “Tron: Legacy.” It’s not perfect, but the soundtrack is great, the CGI holds up well, and the writing and acting actually bear the mark of real human beings.

Strap ’em on: From watches to glasses, snag our top wearables this Black Friday

The speed of tech is a formidable force, so we have paused to catch you up on the cutting-edge devices and gadgets you might want to bump to the top of your list if you’re hoping to speedrun Black Friday this year.

Best wearables to buy during Black Friday

Apple Watch Series 10 or 11

Apple Watch is one of the best-selling wearables on the planet, largely due to its customization options, iconic style, and wide range of fitness features. However, while Apple used to add fun new sensors and capabilities every year, newer Apple Watches have reached a point of innovation stagnation. Aside from battery life improvements, last year’s Series 10 has all the new features that landed on the Series 11, including high blood pressure detection and sleep score tracking, plus all the usual tricks like heart rate monitoring, ECG scans, blood oxygen levels, AFIB detection, and more.


While I do recommend an Apple Watch for anyone in the Apple ecosystem, your money would be better spent on a Series 10, if you can find one. Otherwise, you’re looking at $399 MSRP or more for a Series 11.

The Series 11 looks great, but for your money, the Series 10 wins out. Photo courtesy of Apple


Pixel Watch 3 or 4

On the Android side, Pixel Watch has quickly become one of the best wearables available. With Fitbit integration, heart rate tracking, daily readiness scores, and a host of other features, Pixel Watch is the best that Android users can buy. As for which model deserves a spot on your wrist (or list), last year’s Pixel Watch 3 is where the device really started to hit its stride, while the newest Pixel Watch 4 for $349.99 adds quality-of-life improvements (40 hours of battery life per charge and a larger domed display) that further refine the experience. You’d be safe with either one of these under the tree this season.

The Pixel Watch 4: just like the 3, only better. Photo courtesy of Android


Oura Ring 4

For anyone who wants an ultra-sleek or unconventional wearable fitness tracker, Oura Ring 4 is easily the best ring the company has ever made. With a new slimmer design, it looks more like a piece of jewelry than a tech gadget. It comes in a range of sizes and finishes from $249 to $499, and it tracks everything you’d expect from a larger smartwatch, including heart rate data, sleep and rest, and stress levels. Although Oura Ring is great for men and women, its added female health features make it especially great for the lady in your life.

Oura Ring 4 hits new highs. Photo courtesy of Oura


One more thing: Speaking of Fitbit, it’s easy to recommend a Charge series fitness band or Versa watch to anyone looking to slim down in the New Year. However, hold off for now. Google recently confirmed that new devices are on the way soon, so only buy a Fitbit this week if you get a really good discount.

Try something totally new for Black Friday

For the more adventurous gift-giving type, a new product category is making waves in the tech space. From Apple to Google, Meta and more, everyone is trying their best to make augmented reality, virtual reality, and extended reality glasses, goggles, and headsets a thing. The category is still very young and OEMs are still trying to figure out exactly what users want, but if you’d like to try it out for yourself or with a loved one, here are a few devices to keep in mind.

Apple Vision Pro

Apple’s first foray into AR didn’t go so well. The first-generation Vision Pro was heavy, clunky, and very expensive. It didn’t sell in high numbers, either. However, that didn’t stop Apple from finally launching a sequel that hit shelves last month. With a much faster M5 chip and an improved dual-knit headband for comfort, the second-generation Vision Pro offers an immersive spatial computing experience that puts you directly inside your work, movies, and memories. If you ever wanted to know what it’s like to wear an iPad on your face, this is the way to find out.

First was worst, second is best: the new Vision Pro. Photo courtesy of Apple

One more thing: Vision Pro is an impressive piece of tech, but keep in mind that developers have been slow to create apps for the headset. Nearly two years after the first version launched, several critical apps are still missing from the App Store, including YouTube, Netflix, and Spotify. At this point, there’s no telling if or when the platform will ever take off like iPhone, Apple Watch, and Mac, so only pick this one up if you’re really curious about AR/VR/XR.

Samsung Galaxy XR

Almost one full year ago, Google announced its glasses operating system called Android XR. Even then, the company hinted that the first Android XR device would come from Samsung, and after months of teases and unveils, it is finally here. Samsung Galaxy XR is Android’s first direct Apple Vision Pro competitor. Using the same concept — building a product that lets users dive directly into the action — Galaxy XR differentiates itself in several key ways. For starters, Gemini sits at the center of the user experience, helping users navigate the UI, pull up information, and learn more about whatever they see on their screens. The device itself is also lighter than Vision Pro, making it easier to wear for longer sessions. Android XR supports most apps already found on the Google Play Store, which means it does have access to YouTube, Netflix, and other entertainment apps, all ready to go.

Samsung’s Galaxy XR wants you scrolling past the Vision Pro. Photo courtesy of Samsung

One more thing: While Samsung Galaxy XR is an interesting alternative to Apple Vision Pro, its underlying software is brand-new. Developers will likely make tweaks and squash bugs as they flesh out the feature list for Android XR. It’s also worth noting that Google has a reputation for killing projects early if they don’t amass a large user base within the first several years. In other words, if the Samsung Galaxy XR isn’t a success, Android XR may get the axe sooner rather than later. No one has a crystal ball, though, so it’s hard to predict what will happen until a bit more time has passed.

Ray-Ban Meta Glasses (Gen 2)

Where Apple Vision Pro and Samsung Galaxy XR are meant to be worn while sitting down in a controlled space, Ray-Ban Meta Glasses (Gen 2) are smart glasses meant to be worn out in the world. These don’t have displays, but they have built-in cameras controlled by an AI assistant that can see what you see and tell you about the world around you in real time. Ask it about the architecture of a building, capture high-quality videos and photos of memories as they happen in front of you, or play music through the built-in open-air speakers. If you ever wanted an AI assistant for your face, Ray-Ban Meta Glasses (Gen 2) are a good place to start.

Play it cool with the new Meta Glasses, and you might not get the wrong kind of stares. Photo courtesy of Ray-Ban/Meta

Let the deals begin!

The Black Friday deals have already started to roll out, and many of them will carry into Cyber Monday and the weeks leading up to Christmas. Still, there’s no telling how long the gadgets on your list will be on sale, so grab them sooner rather than later to make sure you have exactly what you want under the tree.

Happy Black Friday weekend and merry Christmas!

Elon Musk’s Vision of the Future: Work Is ‘Optional,’ Money Is Irrelevant as AI and Robots Take Over Everything

Elon Musk shared his vision of the future at the U.S.-Saudi Investment Forum in Washington, DC this week. According to the tech tycoon, work will be optional and money will lose all meaning as AI-powered robots take over every aspect of the economy over the next two decades.

The post Elon Musk’s Vision of the Future: Work Is ‘Optional,’ Money Is Irrelevant as AI and Robots Take Over Everything appeared first on Breitbart.

GOD-TIER AI? Why there’s no easy exit from the human condition

Many working in technology are entranced by a story of a god-tier shift that is soon to come. The story is the “fast takeoff” for AI, often involving an “intelligence explosion.” There will be a singular moment, a cliff-edge, when a machine mind, having achieved critical capacities for technical design, begins to implement an improved version of itself. In a short time, perhaps mere hours, it will soar past human control, becoming a nearly omnipotent force, a deus ex machina for which we are, at best, irrelevant scenery.

This is a clean narrative. It is dramatic. It has the terrifying, satisfying shape of an apocalypse.

It is also a pseudo-messianic myth resting on a mistaken understanding of what intelligence is, what technology is, and what the world is.

The fantasy of a runaway supermind achieving escape velocity collides with the stubborn, physical, and institutional realities of our lives. This narrative mistakes a scalar for a capacity, ignoring the fact that intelligence is not a context-free number but a situated process, deeply entangled with physical constraints.

The fixation on an instantaneous leap reveals a particular historical amnesia. We are told this new tool will be a singular event. The historical record suggests otherwise.

Major innovations, the ones that truly resculpted civilization, were never events. They were slow, messy, multi-decade diffusions. The printing press did not achieve the propagation of knowledge overnight; its revolutionary power was in the gradual enabling of the secure communication of information, which in turn allowed knowledge to compound. The steam engine unfolded over generations, its deepest impact trailing its invention by decades.

With each novel technology, we have seen a similar cycle of panic: a flare of moral alarm, a set of dire predictions, and then, inevitably, the slow, grinding work of normalization. The world adapts. The apocalypse is deferred. The technology is integrated. There is little reason to believe this time is different, however much the myth insists upon it.

The fantasy of a fast takeoff is conspicuously neat. It is a narrative free of friction, of thermodynamics, of the intractable mess of material existence. Reality, in contrast, has all of these things. A disembodied mind cannot simply will its own improved implementation into being.

Photo by Arda Kucukkaya/Anadolu via Getty Images

Any improvement, recursive or otherwise, encounters physical limits. Computation is bounded by the speed of light. The required energy is already staggering. Improvements will require hardware that depends on factories, rare minerals, and global supply chains. These things cannot be summoned by code alone. Even when an AI can design a better chip, that design will need to be fabricated. The feedback loop between software insight and physical hardware is constrained by the banal, time-consuming realities of engineering, manufacturing, and logistics.

The intellectual constraints are just as rigid. The notion of an “intelligence explosion” assumes that all problems yield to better reasoning. This is an error. Many hard problems are computationally intractable and provably so. They cannot be solved by superior reasoning; they can only be approximated in ways subject to the limits of energy and time.

Ironically, we already have a system of recursive self-improvement. It is called civilization, employing the cooperative intelligence of humans. Its gains over the centuries have been steady and strikingly gradual, not explosive. Each new advance requires more, not less, effort. When the “low-hanging fruit” is harvested, diminishing returns set in. There is no evidence that AI, however capable, is exempt from this constraint.

Central to the concept of fast takeoff is the erroneous belief that intelligence is a singular, unified thing. Recent AI progress provides contrary evidence. We have not built a singular intelligence; we have built specific, potent tools. AlphaGo achieved superhuman performance in Go, a spectacular leap within its domain, yet its facility did not generalize to medical research. Large language models display great linguistic ability, but they also “hallucinate,” and pushing from one generation to the next requires not a sudden spark of insight, but an enormous effort of data and training.

The likely future is not a monolithic supermind but an AI service providing a network of specialized systems for language, vision, physics, and design. AI will remain a set of tools, managed and combined by human operators.

To frame AI development as a potential catastrophe that suddenly arrives swaps a complex, multi-decade social challenge for a simple, cinematic horror story. It allows us to indulge in the fantasy of an impending technological judgment, rather than engage with the difficult path of development. The real work will be gradual, involving the adaptation of institutions, the shifting of economies, and the management of tools. The god-machine is not coming. The world will remain, as ever, a complex, physical, and stubbornly human affair.

Trump and Elon want TRUTH online. AI feeds on bias. So what’s the fix?

The Trump administration has unveiled a broad action plan for AI (America’s AI Action Plan). The general vibe is one of treating AI like a business, aiming to sell the AI stack worldwide and generate a lock-in for American technology. “Winning,” in this context, is primarily economic. The plan also includes the sorely needed idea of modernizing the electrical grid, a growing concern due to rising electricity demands from data centers. While any extra business is welcome in a heavily indebted nation, the section on the political objectivity of AI is too brief and misunderstands both the root cause of political bias in AI and its role in the culture war.

The plan uses the term “objective” and implies that a lack of objectivity is entirely the fault of the developer, for example:

Update Federal procurement guidelines to ensure that the government only contracts with frontier large language model (LLM) developers who ensure that their systems are objective and free from top-down ideological bias.

The fear that AIs might tip the scales of the culture war away from traditional values and toward leftism is real. Try asking ChatGPT, Claude, or even DeepSeek about climate change, where COVID came from, or USAID.

This desire for objectivity of AI may come from a good place, but it fundamentally misconstrues how AIs are built. AI in general and LLMs in particular are a combination of data and algorithms, which further break down into network architecture and training methods. Network architecture is frequently based on stacking transformer or attention layers, though it can be modified with concepts like “mixture of experts.” Training methods are varied and include pre-training, data cleaning, weight initialization, tokenization, and techniques for altering the learning rate. They also include post-training methods, where the base model is modified to conform to a metric other than the accuracy of predicting the next token.
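
To make the phrase “accuracy of predicting the next token” concrete, here is a minimal sketch of the kind of loss a base model is typically trained to reduce during pre-training. It assumes a toy four-word vocabulary and made-up scores; the names `softmax`, `next_token_loss`, `vocab`, and `logits` are illustrative and are not taken from any particular model or library.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def next_token_loss(logits, target_index):
    """Cross-entropy: negative log-probability of the true next token."""
    probs = softmax(logits)
    return -math.log(probs[target_index])

# Hypothetical toy vocabulary and scores, for illustration only.
vocab = ["the", "cat", "sat", "mat"]
logits = [0.5, 2.0, 0.1, -1.0]  # this pretend model favors "cat"

loss_if_cat = next_token_loss(logits, vocab.index("cat"))
loss_if_mat = next_token_loss(logits, vocab.index("mat"))

# The loss is lower when the token the model favored is the correct one.
print(loss_if_cat < loss_if_mat)
```

Pre-training nudges the model's weights so that this loss shrinks across enormous amounts of text. Post-training, as described above, optimizes for something other than this quantity.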

Many have complained that post-training methods like Reinforcement Learning from Human Feedback (RLHF) introduce political bias into models at the cost of accuracy, causing them to avoid controversial topics or spout opinions approved by the companies, opinions usually farther to the left than those of the average user. “Jailbreaking” models to avoid such restrictions was once a common pastime, but it is becoming harder, as corporate safety measures, sometimes as complex as entirely new models, scan both the input to and output from the underlying base model.

As a result of this battle between RLHF and jailbreakers, an idea has emerged that these post-training methods and safety features are how liberal bias gets into the models. The belief is that if we simply removed these, the models would display their true objective nature. Unfortunately for both the Trump administration and the future of America, this is only partially correct. Developers can indeed make a model less objective and more biased in a leftward direction under the guise of safety. However, it is very hard to make models that are more objective.

The problem is data

According to “Google AI Mode vs. Traditional Search & Other LLMs,” the top domains cited by LLMs are: Reddit (40%), YouTube (26%), Wikipedia (23%), Google (23%), Yelp (21%), Facebook (20%), and Amazon (19%).

This seems to imply a lot of the outside-fact data in AIs comes from Reddit. Spending trillions of dollars to create an “eternal Redditor” isn’t going to cure cancer. At best, it might create a “cure cancer cheerleader” who hypes up every advance and forgets about it two weeks later. One can only do so much in the algorithm layer to counteract the frame of mind of the average Redditor. In this sense, the political slant of LLMs is less due to the biases of developers and corporations (although they do exist) and more due to the biases of the training data, which is heavily skewed toward being generated during the “woke tyranny” era of the internet.

In this way, the AI bias problem is not about removing bias to reveal a magic objective base layer. Rather, it is about creating a human-generated and curated set of true facts that can then be used by LLMs. Using legislation to remove the methods by which left-leaning developers push AIs into their political corner is a great idea, but it is far from sufficient. Getting humans to generate truthful data is extremely important.

The pipeline to create truthful data likely needs at least four steps.

1. Raw data generation of detailed tables and statistics (usually done by agencies or large enterprises).

2. Mathematically informed analysis of this data (usually done by scientists).

3. Distillation of scientific studies for educated non-experts (in theory done by journalists, but in practice rarely done at all).

4. Social distribution via either permanent (wiki) or temporary (X) channels.

This problem of truthful data plus commentary for AI training is a government, philanthropic, and business problem.

I can imagine an idealized scenario in which all these problems are solved by harmonious action in all three directions. The government can help the first portion by forcing agencies to be more transparent with their data, putting it into both human-readable and computer-friendly formats. That means more CSVs, plain text, and hyperlinks and fewer citations, PDFs, and fancy graphics with hard-to-find data. FBI crime statistics, immigration statistics, breakdowns of government spending, the outputs of government-conducted research, minute-by-minute election data, and GDP statistics are fundamentally pillars of truth and are almost always politically helpful to the broader right.

In an ideal world, the distillation of raw data into causal models would be done by a team of highly paid scientists via a nonprofit or a government contract. This work is too complex to be left to the crowd, and its benefits are too distributed to be easily captured by the market.

The journalistic portion of combining papers into an elite consensus could be done similarly to today: with high-quality, subscription-based magazines. While such businesses can be profitable, for this content to integrate with AI, the AI companies themselves need to properly license the data and share revenue.

The last step seems to be mostly working today, as it would be done by influencers paid via ad revenue shares or similar engagement-based metrics. Creating permanent, rather than disappearing, data (à la Wikipedia) is a time-intensive and thankless task that will likely need paid editors in the future to keep the quality bar high.

Freedom doesn’t always boost truth

However, we do not live in an ideal world. The epistemic landscape has vastly improved since Elon Musk’s purchase of Twitter. At the very least, truth-seeking accounts don’t have to deal with as much arbitrary censorship. Even other media have made token statements claiming they will censor less, even as some AI “safety” features are ramped up to a much higher setting than social media censorship ever was.

The challenge with X and other media is that tech companies generally favor technocratic solutions over direct payment for pro-social content. There seems to be a widespread belief in a marketplace of ideas: the idea that without censorship (or with only some person’s favorite censorship), truthful ideas will win over false ones. This likely contains an element of truth, but the peculiarities of each algorithm may favor only certain types of truthful content.

“X is the new media” is a commonly spoken refrain. Yet both anonymous and public accounts on X are implicitly burdened with tasks as varied and complex as gathering election data, creating long think pieces, and the consistent repetition of slogans reinforcing a key message. All for a chance of a few Elon bucks. They are doing this while competing with stolen-valor thirst traps from overseas accounts. Obviously, most are not that motivated and stick to pithy and simple content rather than intellectually grounded think pieces. The broader “right” is still needlessly ceding intellectual and data-creation ground to the left, despite occasional victories in defunding anti-civilizational NGOs and taking control of key platforms.

The other issue experienced by data creators across the political spectrum is the reliance on unpaid volunteers. As the economic belt inevitably tightens and productive people have less spare time, the supply of quality free data will worsen. It will also worsen as both platforms and users feel rightful indignation at their data being “stolen” by AI companies making huge profits, thus moving content into gatekept platforms like Discord. While X is unlikely to go back to the “left,” its quality can certainly fall farther.

Even Redditors and Wikipedia contributors provide fairly complex, if generally biased, data that powers the entire AI ecosystem. Also for free. A community of unpaid volunteers working to spread useful information sounds lovely in principle. However, in addition to the decay in quality, these kinds of “business models” are generally very easy to disrupt with minor infusions of outside money, if it just means paying a full-time person to post. If you are not paying to generate politically powerful content, someone else is always happy to.

The other dream of tech companies is to use AI to “re-create” the entirety of the pipeline. We have heard so much drivel about “solving cancer” and “solving science.” While speeding up human progress by automating simple tasks is certainly going to work and is already working, the dream of full replacement will remain a dream, largely because of “model collapse,” the situation where AIs degrade in quality when they are trained on data generated by themselves. Companies occasionally hype up “no data/zero-knowledge/synthetic data” training, but a big example from 10 years ago, “RL from random play,” which worked for chess and Go, went nowhere in games as complex as StarCraft.
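
Model collapse can be illustrated with a toy far simpler than an LLM. The sketch below is an analogy under loud assumptions, not how any AI company trains its models: the “model” is just a mean and a standard deviation, each generation is fit only to samples drawn from the previous generation, and the function name `simulate_collapse` and its parameters are hypothetical.

```python
import random
import statistics

def simulate_collapse(generations=200, sample_size=10, seed=0):
    """Refit a Gaussian to its own samples and track its spread.

    Returns the standard deviation at each generation, starting
    with the original "human" distribution (mean 0, std 1).
    """
    rng = random.Random(seed)
    mean, std = 0.0, 1.0
    history = [std]
    for _ in range(generations):
        # Generate "synthetic data" from the current model...
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        # ...then fit the next model to that data alone.
        mean = statistics.fmean(samples)
        std = statistics.pstdev(samples)
        history.append(std)
    return history

history = simulate_collapse()
print(f"initial spread: {history[0]:.3f}")
print(f"final spread:   {history[-1]:.3g}")
```

With these settings the spread typically shrinks by many orders of magnitude. Each generation loses a little of the original variety and has no outside data to recover it from, which is a rough analogue of the degradation described above.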

So where does truth come from?

This brings us to the recent example of Grokipedia. Perusing it gives one a sense that we have taken a step in the right direction, with an improved ability to summarize key historical events and medical controversies. However, a number of articles are lifted directly from Wikipedia, which risks drawing the wrong lesson. Grokipedia can’t “replace” Wikipedia in the long term because Grok’s own summarization is dependent on it.

Like many of Elon Musk’s ventures, Grokipedia is two steps forward, one step back. The forward steps are a customer-facing Wikipedia that seems to be of higher quality and a good example of AI-generated long-form content that is not mere slop, achieved by automating the tedious, formulaic steps of summarization. The backward step is a lack of understanding of what the ecosystem looks like without Wikipedia. Many of Grokipedia’s articles are lifted directly from Wikipedia, suggesting that if Wikipedia disappears, it will be very hard to keep neutral articles properly updated.

Even the current version suffers from a “chicken and egg” source-of-truth problem. If no AI has the real facts about the COVID vaccine and categorically rejects data about its safety or lack thereof, then Grokipedia will not be accurate on this topic unless a fairly highly paid editor researches and writes the true story. As mentioned, model collapse is likely to result from feeding too much of Grokipedia to Grok itself (and other AIs), leading to degradation of quality and truthfulness. Relying on unpaid volunteers to suggest edits creates a very easy vector for paid NGOs to influence the encyclopedia.

The simple conclusion is that to be good training data for future AIs, the next source of truth must be written by people. If we want to scale this process and employ a number of trustworthy researchers, Grokipedia by itself is very unlikely to make money and will probably forever be a money-losing business. It would likely be both a better business and a better source of truth if, instead of being written by AI to be read by people, it was written by people to be read by AI.

Eventually, the domain of truth needs to be carefully managed, curated, and updated by a legitimate organization that, while not technically part of the government, would be endorsed by it. Perhaps a nonprofit NGO, except good and actually helping humanity. The idea of “the Foundation” or “Antiversity” is not new, but our over-reliance on AI to do the heavy lifting is. Such an institution, or a series of them, would need to be bootstrapped by people willing to invest in our epistemic future for the very long term.

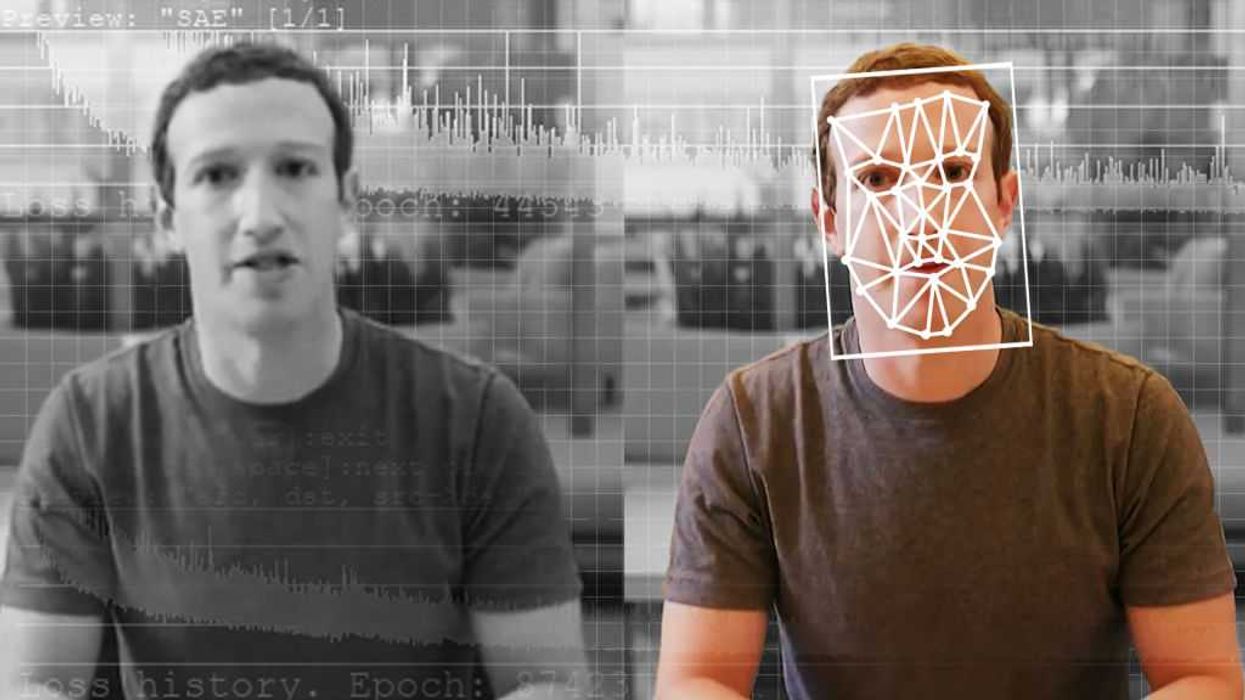

Fooled by fake videos? Unsure what to trust? Here’s how to tell what’s real.

There’s a term for artificially generated content that permeates online spaces — creators call it AI slop, and when generative AI first emerged back in late 2022, that was true. AI photos and videos used to be painfully, obviously fake. The lighting was off, the physics were unrealistic, people had too many fingers or limbs or odd body proportions, and textures appeared fuzzy or glossy, even in places where it didn’t make sense. They just didn’t look real.

Many of you probably remember the nightmare fuel that was the early video of Will Smith eating spaghetti. It’s terrifying.

This isn’t the case anymore. In just two short years, AI videos have become convincingly realistic to the point that deepfakes (content that perfectly mimics real people, places, and events) are now running rampant. For just one quick example of how far AI videos have come, check out Will Smith eating spaghetti, then and now.

Even the Trump administration recently rallied around AI-generated content, using it as a political tool to poke fun at the left and its policies. The latest entry portrayed AI Hakeem Jeffries wearing a sombrero while standing beside a miffed Chuck Schumer who is speaking a little more honestly than usual, a telltale sign that the video is fake.

While some AI-generated videos on the internet are simple memes posted in good fun, there is a darker side to AI content that makes the internet an increasingly unreliable place for truth, facts, and reality.

How to tell if an online video is fake

AI videos in 2025 are more convincing than ever. Not only do most AI video platforms pass the spaghetti-eating Turing test, but they have also solved many of the issues that used to run rampant (too many fingers, weird physics, etc.). The good news is that there are still a few ways to tell an AI video from a real one.

At least for now.

First, most videos created with OpenAI Sora, Grok Imagine, and Gemini Veo have clear watermarks stamped directly on the content. I emphasize “most,” because last month, violent Sora-generated videos cropped up online that didn’t have a watermark, suggesting that either the marks were manually removed or there’s a bug in Sora’s platform.

Your second-best defense against AI-generated content is your gut. We’re still early enough in the AI video race that many of them still look “off.” They have a strange filter-like sheen to them that’s reminiscent of watching content in a dream. Natural facial expressions and voice inflections continue to be a problem. AI videos also still have trouble with tedious or more complex physics (especially fluid motions) and special effects (explosions, crashing waves, etc.).

At the same time, other videos, like this clip of Neil deGrasse Tyson, are shockingly realistic. Even the finer details are nearly perfect, from the background in Tyson’s office to his mannerisms and speech patterns — all of it feels authentic.

Now watch the video again. Look closely at what happens after Tyson reveals the truth. It’s clear that the first half of the video is fake, but it’s harder to tell if the second half is actually real. A notable red flag is the way the video floats on top of his phone as he pulls it away from the camera. That could just be a simple editing trick, or it could be a sign that the entire thing is a deepfake. The problem is that there’s no way to know for sure.

Why deepfakes are so dangerous

Deepfakes pose a real problem to society, and no one is ready for the aftermath. According to Statista, U.S. adults spend more than 60% of their daily screen time watching video content. If the content they consume isn’t real, this can greatly impact their perception of real-world events, warp their expectations around life, love, and happiness, facilitate political deception, chip away at mental health, and more.

Truth only exists if the content we see is real. False fabrications can easily twist facts, spread lies, and sow doubt, all of which will destabilize social media, discredit the internet at large, and upend society overall.

Deepfakes, however, are real, at least in the sense that they exist. Even worse, they are becoming more prevalent, and they are outright dangerous. They are a threat because they are extremely convincing and almost impossible to discern from reality. Not only can a deepfake be used to show a prominent figure (politicians, celebrities, etc.) doing or saying bad things that didn’t actually happen, but deepfakes can also be used as an excuse to cover up something a person actually did on film. The damage goes both ways, obfuscating the truth, ruining reputations, and cultivating chaos.

Soon, videos like the Neil deGrasse Tyson clip will become the norm, and the consequences will be utterly dire. You’ll see presidents declare war on other countries without uttering a real word. Foreign nations will drop bombs on their opponents without firing a shot, and terrorists will commit atrocities on innocent people that don’t exist. All of it is coming, and even though none of it will be real, we won’t be able to tell the difference between truth and lies. The internet — possibly even the world — will descend into turmoil.

Don’t believe everything you see online

Okay, so the internet has never been a bastion of truth. Since the dawn of dial-up, different forms of deception have crept throughout, bending facts or outright distorting the truth wholesale. This time, it’s a little different. Generative AI doesn’t just twist narratives to align with an agenda. It outright creates them, mimicking real life so convincingly that we’re compelled to believe what we see.

From here on out, it’s safe to assume that nothing on the internet is real — not politicians spewing nonsense, not war propaganda from some far-flung country, not even the adorable animal videos on your Facebook feed (sorry, Grandma!). None of it is real unless it is verifiable, and that is becoming increasingly hard to do in the age of generative AI. The open internet we knew is dead. The only thing you can trust today is what you see in person with your own eyes and the stories published by trusted sources online. Take everything else with a heaping handful of salt.

This is why reputable news outlets will be even more important in the AI future. If anyone can be trusted to publish real, authentic, truthful content, it should be our media. As for who in the press is telling the truth, Glenn Beck’s “liar, liar” test is a good place to start.
